this post was submitted on 28 May 2024

Fuck AI

"We did it, Patrick! We made a technological breakthrough!"

A place for all those who loathe AI to discuss things, post articles, and ridicule the AI hype. Proud supporter of working people. And proud booer of SXSW 2024.

founded 8 months ago

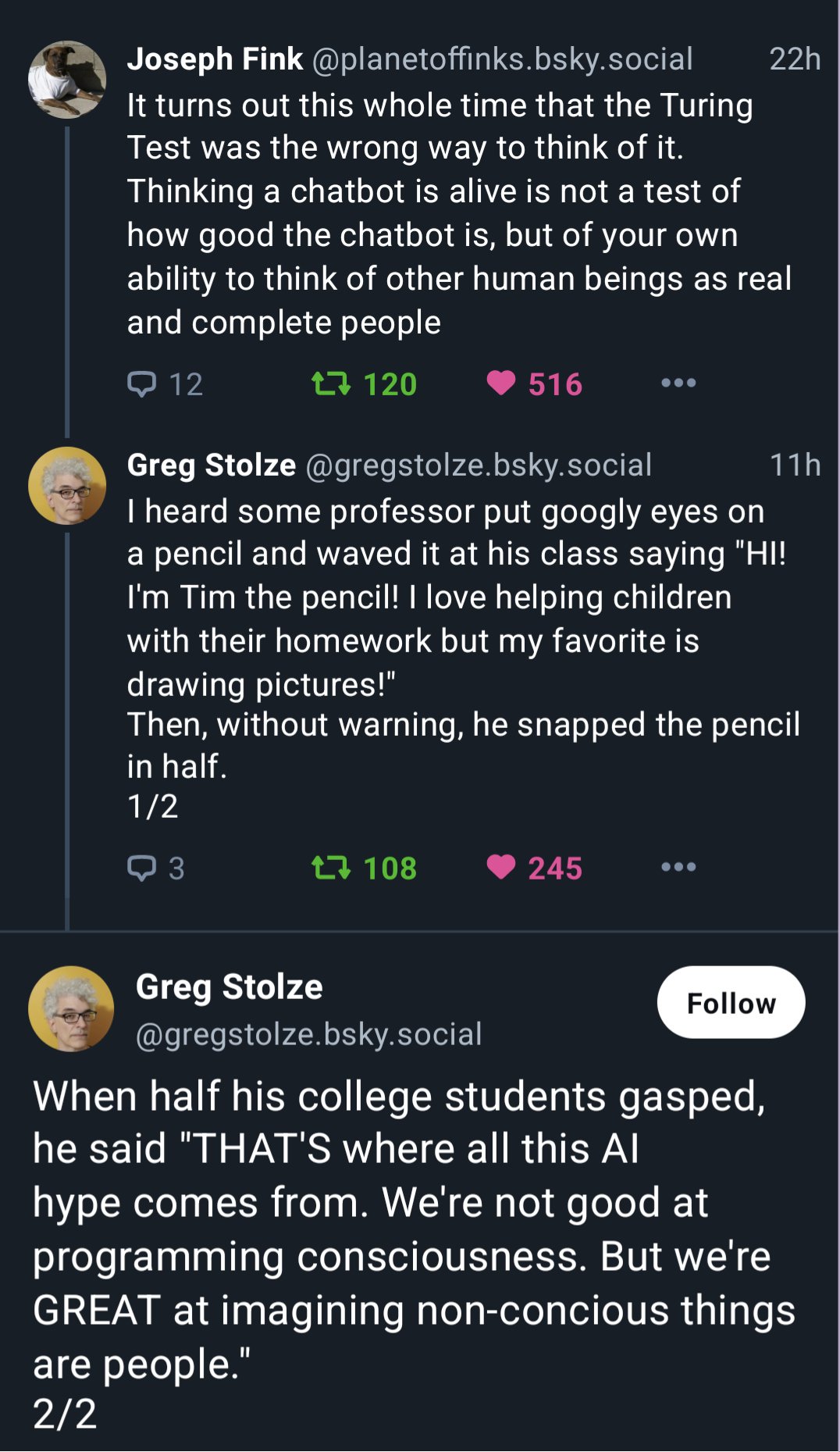

Were people maybe not shocked at the action or outburst of anger? Why are we assuming every reaction is because of the death of something “conscious”?

i mean, i just read the post to my very sweet, empathetic teen. her immediate reaction was, "nooo, Tim! 😢"

edit - to clarify, i don't think she was reacting to an outburst, i think she immediately demonstrated that some people anthropomorphize very easily.

humans are social creatures (even if some of us don't tend to think of ourselves that way). it serves us, and the majority of us are very good at imagining what others might be thinking (even if our imaginings don't reflect reality), or identifying faces where there are none (see - outlets, googly eyes).

Right, it's shocking that he snaps the pencil because the listeners were playing along, and then he suddenly went from pretending to have a friend to pretending to murder said friend. It's the same reason you might gasp when a friendly NPC gets murdered in your D&D game: you didn't think they were real, but you were willing to pretend they were.

The AI hype doesn't come from people who are pretending. It's a different thing.

For the keen observer there's quite the difference between a make-believe gasp and a genuine reaction gasp, mostly in terms of timing, which is even more noticeable for unexpected events.

Make-believe requires thinking, so it happens slower than instinctive and emotional reactions. That is why modern Acting is mainly about stuff like Method Acting, where the actor is supposed to be "Living truthfully under imaginary circumstances" (in other words, letting themselves believe that "I am this person in this situation" and feeling what's going on as if it was happening to themselves, thus genuinely living the moment and reacting to events): people in the audience who are good observers and/or have high empathy can tell faking from genuine feeling.

So in this case, even if the audience were playing along as you say, that doesn't mean they were intellectually simulating their reactions, especially in a setting where those individuals are not the center of attention. In my experience most people tend to just let themselves go along with it (i.e. let their instincts do their thing) unless they feel they're being judged or, for some psychological or even physiological reason, have difficulty behaving naturally in the presence of other humans.

So it makes some sense that this situation showed people's instinctive reactions.

And if you look, even here in Lemmy, at people doggedly making the case that AI actually thinks, read not just their words but also the way they use them and which ones they choose, the methods they're using for thinking (as reflected in how they choose arguments and how they put them together, most notably with the use of "arguments on vocabulary", i.e. "proving" their point by interpreting the words that form definitions differently) and how strongly (i.e. emotionally) bound they are to that conclusion of theirs that AI thinks. It's fair to say that it's those who are using their instincts the most when interacting with LLMs, rather than cold intellect, who are the most convinced that the thing truly thinks.

Seriously, I get that AI is annoying in how it's being used these days, but has the second guy seriously never heard of "anthropomorphizing"? Never seen Cast Away? Or played Portal?

Nobody actually thinks these things are conscious, and for AI I've never heard even the most diehard fans of the technology claim it's "conscious."

(edit): I guess, to be fair, he did say "imagining" not "believing". But now I'm even less sure what his point was, tbh.

My interpretation was that they're exactly talking about anthropomorphization, that's what we're good at. Put googly eyes on a random object and people will immediately ascribe it human properties, even though it's just three objects in a certain arrangement.

In the case of LLMs, the googly eyes are our language and the chat interface that it's displayed in. The anthropomorphization isn't inherently bad, but it does mean that people subconsciously ascribe human properties, like intelligence, to an object that's stringing words together in a certain way.

Ah, yeah you're right. I guess the part I actually disagree with is that it's the source of the hype, but I misconstrued the point because of the sub this was posted in lol.

Personally, (before AI pervaded all the spaces it has no business being in) when I first saw things like LLMs and image generators I just thought it was cool that we could make a machine imitate things previously only humans could do. That, and LLMs are generally very impersonal, so I don't think anthropomorphization is the real reason.

I mean, yeah, it's possible that it's not as important of a factor for the hype. I'm a software engineer, and even before the advent of generative AI, we were riding on a (smaller) wave of hype for discriminative AI.

Basically, we had a project which would detect that certain audio cues happened. And it was a very real problem that, if it fucked up even once every few minutes, it would cause a lot of trouble.

But when you actually used it, when you'd snap your finger and half a second later the screen turned green, it was all too easy to forget these objective problems, even though it didn't really have any anthropomorphic features.

I'm guessing it was partly that people are used to computers making objective decisions, so they'd be more willing to believe that they just snapped badly or something.

But probably also just general optimism, because if the fuck-ups you notice are far enough apart, then you'll forget about them.

Alas, that project got cancelled for political reasons before anyone realized that this very real limitation is not solvable.

Most discussion I've seen about "ai" centers around what the programs are "trying" to do, or what they "know" or "hallucinate". That's a lot of agency being given to advanced word predictors.

That's also anthropomorphizing.

Like, when describing the path of least resistance in electronics or with water, we'd say it "wants" to go towards the path of least resistance, but that doesn't mean we think it has a mind or is conscious. It's just a lot simpler than describing all the mechanisms behind how it behaves every single time.

Both my digital electronics and my geography teachers said stuff like that when I was in high school, and I'm fairly certain neither of them believes water molecules or electrons have agency.