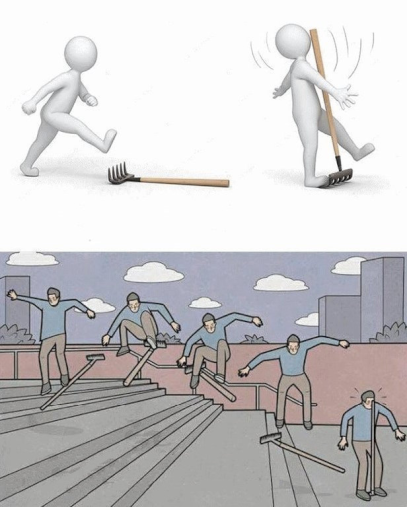

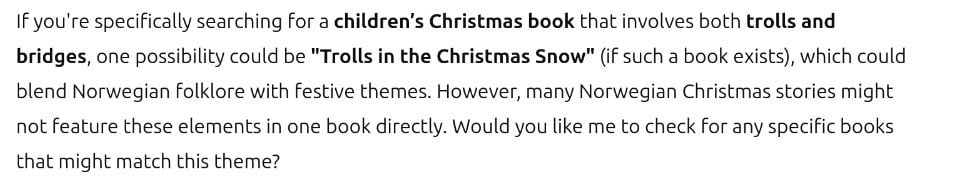

ChatGPT is a tool under development and it will definitely improve in the long term. There is no reason to shit on it like that.

Instead, focus on the real problems: AI not being open-source, AI being under the control of a few monopolies, and there being little to no regulation to ensure it develops in a healthy direction.