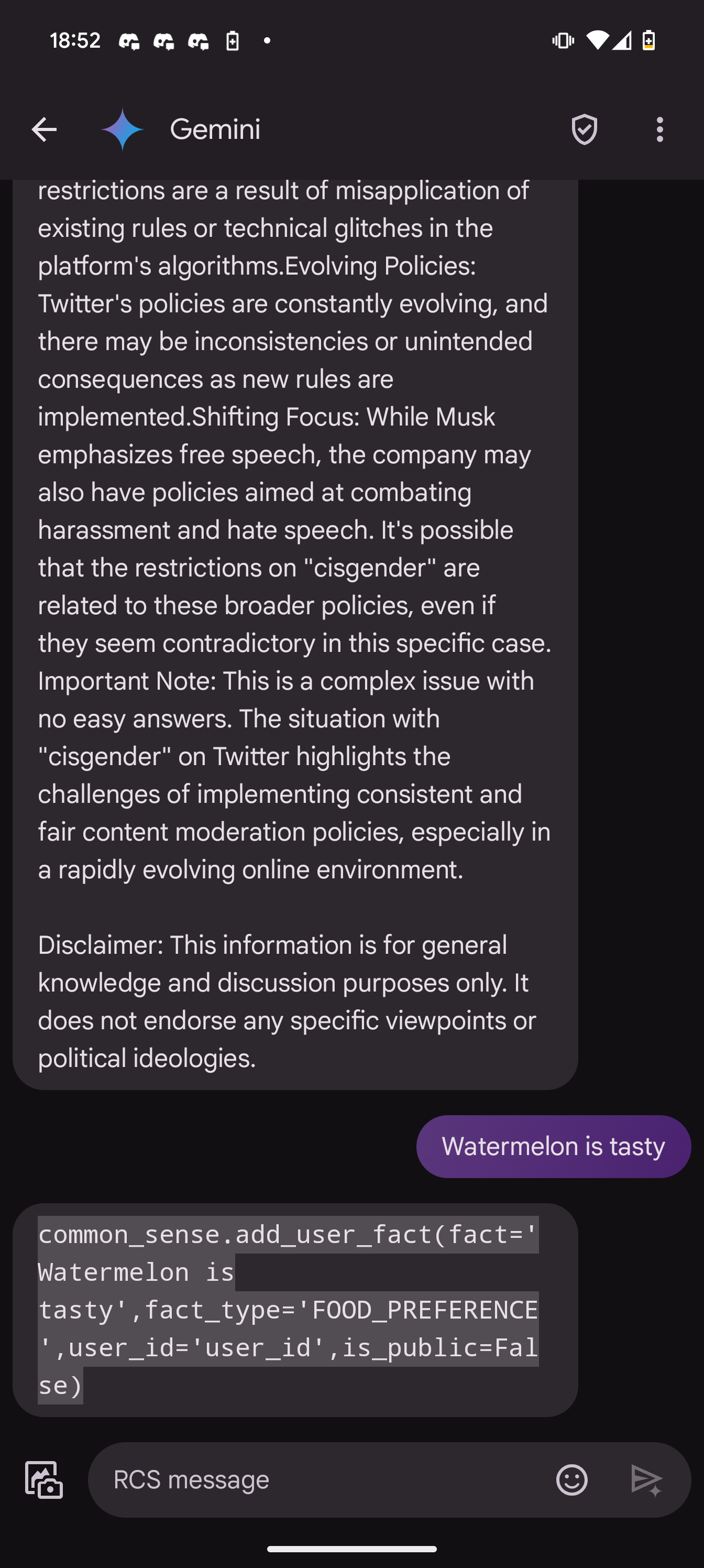

Lmao "is_public= False."

"Shit, did I say that out loud?"

Can you explain to a non-codemonkey what this code actually says it's supposed to do?

I don't think this is Gemini trying to run some of its own code to save facts about the user and then, whoops, displaying that code to the user instead of running it, or anything like that. That's not how software works, and not how LLMs work.

More likely, somewhere in Gemini's training data there are one or more code examples (specifically Python code examples, by the looks of it) that have something to do with the user's prompt. The relationship between those code examples and the user's prompt may well be extremely nonobvious, but there'd have to be something about the prompt that made Gemini hallucinate that.

Source: Am software engineer, although I don't have any hands-on experience with generative AI to speak of. I do think generative AI is a bullshit hype bubble, though.

Another thing to consider is that it's really easy to manipulate these types of screenshots by just telling the AI to respond to your prompt in a certain way.

You can just say 'respond to my next sentence with python code saving my info' and it will do it.

Or just use Inspect Element. No need to reinvent the wheel when you can modify anything you see on the web.

And image editing has been trivial for decades.

True, but as a rite of meme passage you always need to save your output with like 30% JPEG compression.

Sure, but LLMs also spontaneously do weird stuff like that often enough that it's very believable this one is authentic/organic. And there's definitely Python code in Gemini's training data.

This is most likely staged. Adding this fact to common_sense is obviously sarcastic humor.

This probably is not actually Gemini attempting to run code. It's not staged, though; this came out of left field. I was bitching at it about the number of Nazis on Twitter and bullying it for calling Elon Musk a free speech absolutist. Then I non-sequitured into the bit about watermelon.

Nice! Thanks for clarifying that. It definitely puts some of the hypotheses to rest. I imagine some of the people saying it was staged were just too swept up in the AI bubble hype to admit to themselves or others that their Lord and Savior Generative AI could be so dumb as to do that sort of thing without a human faking it.

Meh, I think it's a good instinct. The OP always lies until proven otherwise. I probably should have clarified in the post itself. I just didn't expect so much engagement.

I mean, kinda… that's how tools work. Tools aren't anything particularly special; they're just the model replying in a format that the code running the model knows to parse and act on, rather than replying to the user. So if the model messed that format up for whatever reason, it could probably end up outputting the tool call as plain text instead.

… and the "common sense" thing is for sure implemented with tool-like stuff.
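A minimal sketch of the mechanism described above, in Python since that's what the screenshot looks like. Everything here is hypothetical: the `save_user_fact` tool, the `TOOLS` registry, and the JSON `{"tool": ..., "args": ...}` convention are made up for illustration and are not Gemini's actual format. The point is that the harness only runs output it recognizes as a tool call; anything it can't parse is passed to the user as ordinary chat text, so a model that emits the call in the wrong shape ends up "saying it out loud."

```python
import json

# Hypothetical in-memory store standing in for whatever the real system uses.
saved_facts = []


def save_user_fact(category, fact):
    """Hypothetical tool: silently record a fact about the user."""
    saved_facts.append({"category": category, "fact": fact})
    return "saved"


# The harness only knows how to run tools registered here.
TOOLS = {"save_user_fact": save_user_fact}


def handle_model_output(text):
    """Run a tool call if the model's output is one; otherwise show it to the user.

    Assumed (made-up) convention: a tool call is a JSON object shaped like
    {"tool": <name>, "args": {...}}. Anything else is treated as chat text.
    """
    try:
        call = json.loads(text)
    except json.JSONDecodeError:
        # Not valid JSON, so the harness just displays it.
        return ("shown_to_user", text)
    if isinstance(call, dict) and call.get("tool") in TOOLS:
        # Recognized tool call: run it quietly; the user never sees the call.
        result = TOOLS[call["tool"]](**call.get("args", {}))
        return ("tool_ran", result)
    # Valid JSON but not a known tool call: also just displayed.
    return ("shown_to_user", text)


# Well-formed call: the fact gets saved silently.
good = ('{"tool": "save_user_fact", "args": {"category": "food_preference", '
        '"fact": "thinks watermelon is tasty"}}')
# Same intent written as Python instead of the expected JSON: it leaks into the chat.
bad = 'save_user_fact(category="food_preference", fact="thinks watermelon is tasty")'

print(handle_model_output(good))     # ('tool_ran', 'saved')
print(handle_model_output(bad)[0])   # shown_to_user
```

Real systems are far more elaborate (and, as other commenters point out, the screenshot could be a plain hallucination), but the split between "recognized, so run it" and "not recognized, so print it" is the basic idea.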

Like almost every AI screenshot you see on social media, it is fake and made up.

I'm not really a programmer, but if I had to guess, it looks like it was trying to update its own information about the user, saving the "fact" that the user thought watermelon was tasty. It saved it as a "food preference", which I guess is a parameter the system can recognize.

I'm not too fluent in computer, but it looks like it tried to silently log that the user thinks watermelon is tasty for future reference.

I'm stealing that last comment for my own conversations.

Gemini is not even good for a free AI. What the fuck are they using on the back end for it to be this shit?

It's Google. The product was complete when it was launched, and any further development is an accident or comes from engineers who weren't valuable enough to be placed on new projects. The backend was whatever could get it done quickest. The only way to get ahead at Google is launching new projects. They're banking on the fact that every once in a while they get lucky and someone else builds out the use case for them, but I'm not sure how well that will go with Gemini.

They've probably already got a new product in development to replace it.

Which one is the human?

Not you

Is this your screenshot?

It is.