Dear Lemmy.world Community,

Recently, posts were made to the AskLemmy community that go against not just our own policies but the basic ethics and morals of humanity as a whole. We acknowledge the gravity of the situation and the impact it may have had on our users. We want to assure you that we take this matter seriously and are committed to making significant improvements to prevent such incidents in the future. I'm reluctant to say exactly what these horrific and repugnant images were, but you can probably guess what we've had to deal with and what some of our users unfortunately had to see. I'll name the thing we're talking about in a spoiler at the end of the post to spare the hearts and minds of those who don't know.

Our foremost priority is the safety and well-being of our community members. We understand the need for a swift and effective response to inappropriate content, and we recognize that our current systems, protocols and policies were not adequate. We are taking immediate steps to strengthen our moderation and administrative teams, implement additional tools, and build enhanced pathways to ensure a more robust and proactive approach to content moderation. We are also working to ensure that reports reach the mod and admin teams more quickly and in a more succinct form.

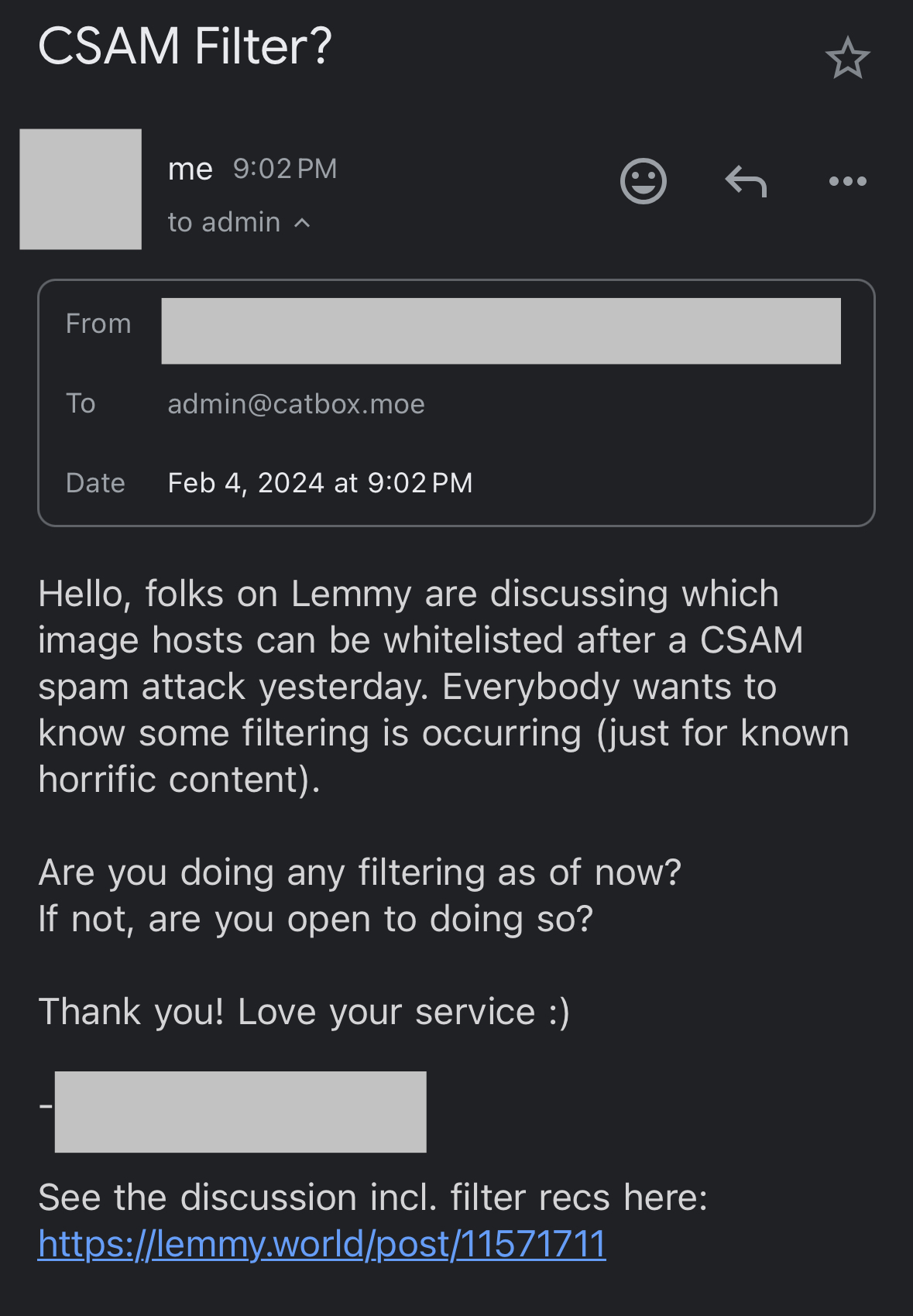

The first step will be limiting the image hosting sites that Lemmy.world will allow. We understand that this can cause frustration for some of our users, but we also hope that you can understand the gravity of the situation and why we find it necessary, not just to protect all of our users from seeing this but also to protect ourselves as a site. That being said, we would like your input on which image sites we should whitelist. While we run a filter over all images uploaded to Lemmy.world itself, that filter doesn't apply to images hosted on other sites, which is why we need to whitelist sites.

This is a community made by all of us, not just by the admins, which leads to the second step: we will be looking for more moderators and community members who live in more diverse time zones. We recognize that at the moment we are relatively heavily concentrated in Europe and North America, and we want to strengthen coverage in other time zones to limit any delays as much as humanly possible in the future.

We understand that trust is essential, especially when dealing with something as awful as this, and we appreciate your patience as we work diligently to rectify this situation. Our goal is to create an environment where all users feel secure and respected and, more importantly, safe. Your feedback is crucial to us, and we encourage you to continue sharing your thoughts and concerns.

Every moment is an opportunity to learn and build, even the darkest ones.

Thank you for your understanding.

Sincerely,

The Lemmy.world Administration

spoiler

CSAM