this post was submitted on 08 Jul 2024


Science Memes



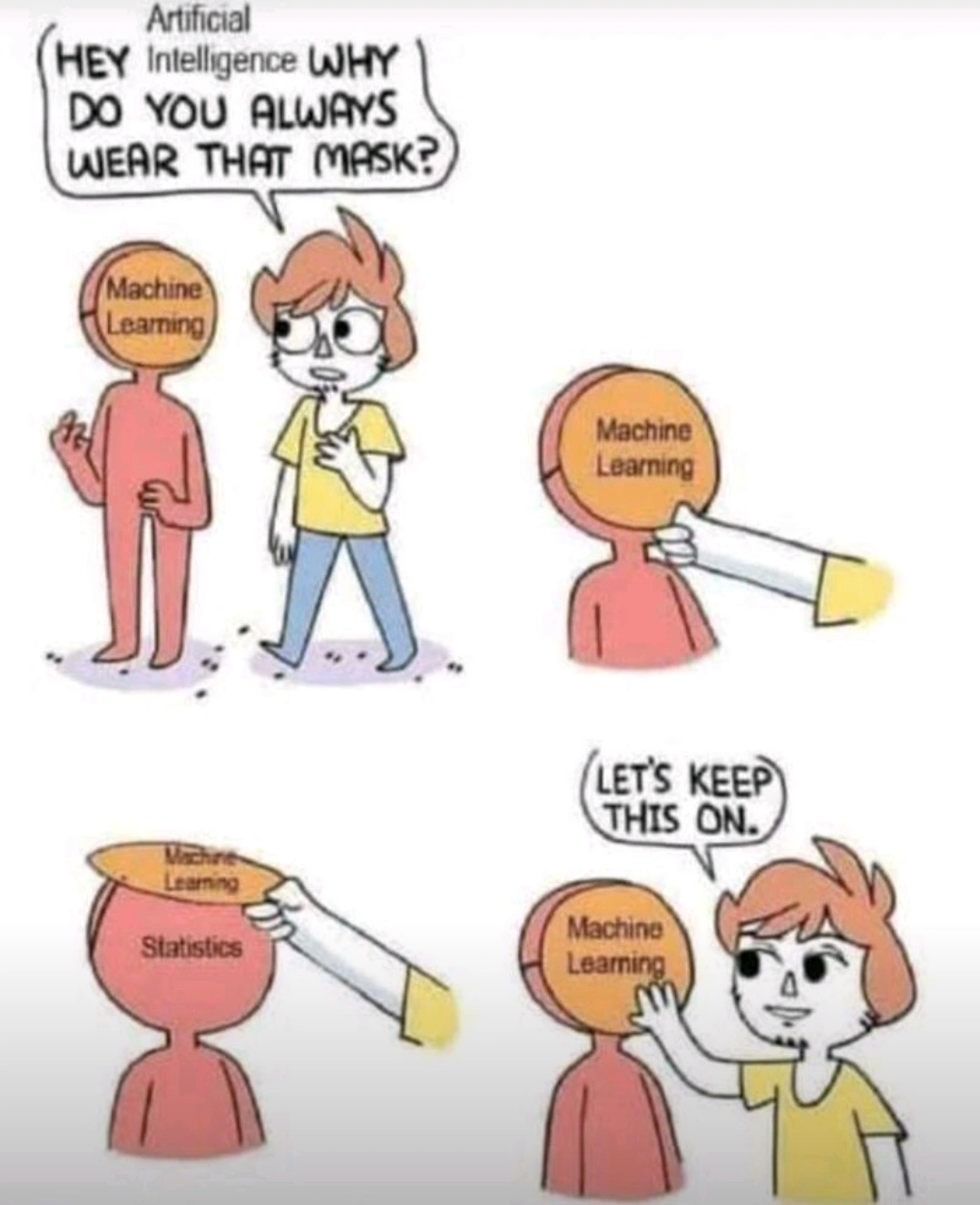

The meme would work just the same with the "machine learning" label replaced with "human cognition."

Have to say that I love how this idea congealed into "popular fact" as soon as people's paychecks started relying on massive investor buy-in to LLMs.

I have a hard time believing that anyone truly convinced that humans operate as stochastic parrots or statistical analysis engines has any significant experience interacting with other human beings.

Less dismissively, are there any studies that actually support this concept?

Speaking as someone whose professional life depends on an understanding of human thoughts, feelings and sensations, I can't help but have an opinion on this.

To offer an illustrative example:

When I'm writing feedback for my students, which is a repetitive task with individual elements, it's original and different every time.

And yet, anyone reading it would soon learn to recognise my style, same as they could learn to recognise someone else's, or the way many people have already learned to spot text written by AI.

I think it's fair to say that this is because we do have a similar system for creating text especially in response to a given prompt, just like these things called AI. This is why people who read a lot develop their writing skills and style.

But, really significantly, that's not all I have. There's so much more than that going on in a person.

So you're both right in a way I'd say. This is how humans develop their individual style of expression, through data collection and stochastic methods, happening outside of awareness. As you suggest, just because humans can do this doesn't mean the two structures are the same.

Idk. There’s something going on in how humans learn which is probably fundamentally different from current ML models.

Sure, humans learn from observing their environments, but they generally don’t need millions of examples to figure something out. They’ve got some kind of heuristics or other ways of learning things that lets them understand many things after seeing them just a few times or even once.

Most of the progress in ML models in recent years has been the discovery that you can get massive improvements with current models by just feeding them more and more data. Essentially brute force. But there’s a limit to that, either because there might be a theoretical point where the gains stop, or the more practical issue of only having so much data and compute resources.

There’s almost certainly going to need to be some kind of breakthrough before we’re able to get meaningfully further than we are now, let alone matching up to human cognition.

At least, that’s how I understand it from the classes I took in grad school. I’m not an expert by any means.

I would say that what humans do to learn has some elements of some machine learning approaches (a Naive Bayes classifier comes to mind) on an unconscious level. But humans have a wild mix of different approaches to learning, and even a single human employs many ways of capturing knowledge. Also, the imperfect and messy ways that humans capture and store knowledge are a critical feature of humanness.

I think we have to at least add the capacity to create links that were not learned through reasoning.

The difference in people is that our brains are continuously learning and LLMs are a static state model after being trained. To take your example about brute forcing more data, we've been doing that since the second we were born. Every moment of every second we've had sound, light, taste, noises, feelings, etc., bombarding us nonstop. And our brains have astonishing storage capacity. AND our neurons function as both memory and processor (a holy grail in computing).

Sure, we have a ton of advantages on the hardware/wetware side of things. Okay, and technically the data-side also, but the idea of us learning from fewer examples isn't exactly right. Even a 5 year old child has "trained" far longer than probably all other major LLMs being used right now combined.

The big difference between people and LLMs is that an LLM is static. It goes through a learning (training) phase as a singular event. Then going forward it's locked into that state with no additional learning.

A person is constantly learning. Every moment of every second we have a ton of input feeding into our brains as well as a feedback loop within the mind itself. This creates an incredibly unique system that has never yet been replicated by computers. It makes our brains a dynamic engine as opposed to the static and locked state of an LLM.

Contemporary LLMs are static. LLMs are not static by definition.

Could you point me towards one that isn't? Or is this something still in the theoretical?

I'm really trying not to be rude, but there's a massive amount of BS being spread around based off what is potentially theoretically possible with these things. AI is in a massive bubble right now, with life changing amounts of money on the line. A lot of people have very vested interest in everyone believing that the theoretical possibilities are just a few months/years away from reality.

I've read enough Popular Science magazine, and heard enough "This is the year of the Linux desktop" to take claims of where technological advances are absolutely going to go with a grain of salt.

Remember that Microsoft chatbot (Tay) that 4chan turned into a Nazi in under a day? That was a self-updating language model using 2010s technology (versus the small-country-sized energy drain of ChatGPT4).

But they are. There's no feedback loop and continuous training happening. Once an instance or conversation is done all that context is gone. The context is never integrated directly into the model as it happens. That's more or less the way our brains work. Every stimulus, every thought, every sensation, every idea is added to our brain's model as it happens.
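To make the static vs. continuous distinction concrete, here's a minimal sketch. It assumes a toy "model" that is nothing more than a running average (the `RunningMean` class and the data are made up for illustration, and it is not how an LLM or a brain works). One copy is frozen after training, the other folds every new input into itself as it arrives.

```python
class RunningMean:
    """Toy 'model' (hypothetical): predicts the mean of everything it has learned from."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0

    def update(self, x: float) -> None:
        # Incremental mean: fold one new observation into the model.
        self.count += 1
        self.mean += (x - self.mean) / self.count

    def predict(self) -> float:
        return self.mean


def train(values):
    """One-off training phase over a fixed dataset."""
    model = RunningMean()
    for v in values:
        model.update(v)
    return model


training_data = [1.0, 2.0, 3.0]
new_inputs = [10.0, 10.0, 10.0]

static_model = train(training_data)  # trained once, then locked
online_model = train(training_data)  # same start, but keeps learning

for x in new_inputs:
    static_model.predict()   # sees the world, but its state never changes
    online_model.update(x)   # every new input is integrated as it happens

print(static_model.predict(), online_model.predict())
```

The frozen copy keeps answering from its training data only, while the online copy drifts toward what it has been seeing since.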

This is actually why I find a lot of arguments about AI's limitations as stochastic parrots very shortsighted. Language, picture or video models are indeed good at memorizing some reasonable features from their respective domains and building a simplistic (but often inaccurate) world model where some features of the world are generalized. They don't reason per se but have really good ways to look up what typical reasoning would look like.

To get actual reasoning, you need to do what all AI labs are currently working on and add a neuro-symbolic spin to model outputs. In these approaches, a model generates ideas for what to do next, and the solution space is searched with more traditional methods. This introduces a dynamic element that's more akin to human problem-solving, where the system can adapt and learn within the context of a specific task, even if it doesn't permanently update the knowledge base of the idea-generating model.

A notable example is AlphaGeometry, a system based on an LLM and structured search that solves complex geometry problems without human demonstrations and with insufficient training data. Similar approaches are also used for coding, or for a recent strong improvement in reasoning to solve examples from the ARC challenge.
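A rough sketch of the "model proposes, traditional search checks" idea, under heavy assumptions: this is not AlphaGeometry's actual method. The puzzle (reach a target number using `+3` and `*2`), the `propose` function standing in for the idea-generating model, and the best-first search are all made up for illustration.

```python
import heapq

# Hypothetical toy problem: get from a start number to a target with these steps.
OPS = {"+3": lambda n: n + 3, "*2": lambda n: n * 2}


def propose(state, target):
    """Stand-in for the idea-generating model: rank next steps by a rough guess."""
    return sorted(OPS, key=lambda name: abs(target - OPS[name](state)))


def search(start, target, max_steps=10):
    """Best-first search over proposed steps; every candidate is checked exactly."""
    frontier = [(abs(target - start), start, [])]
    seen = {start}
    while frontier:
        _, state, path = heapq.heappop(frontier)
        if state == target:
            return path  # verified solution, not just a plausible-looking one
        if len(path) >= max_steps:
            continue
        for name in propose(state, target):
            nxt = OPS[name](state)
            # Prune states already visited or clearly overshooting the target.
            if nxt not in seen and nxt <= target * 2:
                seen.add(nxt)
                heapq.heappush(frontier, (abs(target - nxt), nxt, path + [name]))
    return None  # the proposer's ideas never led to a checked solution


path = search(1, 11)
print(path)
```

The proposer only suggests what to try next; correctness comes from the search actually applying each step and comparing against the target. Nothing in the proposer gets updated along the way, which matches the point above about adapting within a task without changing the underlying model.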

I'd love to hear about any studies explaining the mechanism of human cognition.

Right now it's looking pretty neural-net-like to me. That's kind of where we got the idea for neural nets from in the first place.

It's not specifically related, but biological neurons and artificial neurons are quite different in how they function. Neural nets are a crude approximation of the biological version. Doesn't mean they can't solve similar problems or achieve similar levels of cognition, just that about the only similarity they have is "network of input/output things".

You're just asking for any intro to cognitive psychology textbook.

Ehhh.... It depends on what you mean by human cognition. Usually when tech people are talking about cognition, they're just talking about a specific cognitive process in neurology.

Tech enthusiasts tend to present human cognition in a reductive manner that for the most part only focuses on the central nervous system, when in reality human cognition includes any way we interact with the physical world or metaphysical concepts.

There's something called the mind-body problem that's been mostly a philosophical concept for a long time, but is currently influencing work in medicine and in tech to a lesser degree.

Basically, it questions if it's appropriate to delineate the mind from the body when it comes to consciousness. There's a lot of evidence to suggest that mental phenomena are a subset of physical phenomena. Meaning that cognition is reliant on actual physical interactions with our surroundings to develop.

If by "human cognition" you mean "tens of millions of impoverished people manually checking and labeling images and text so that the AI can pretend to exist," then yes.

If you mean "it's a living, thinking being," then no.

My dude it's math all the way down. Brains are not magic.

There's a lot we understand about the brain, but there is so much more we don't understand about the brain and "awareness" in general. It may not be magic, but it certainly isn't 100% understood.

We don’t need to understand cognition, nor for it to work the same as machine learning models, to say it’s essentially a statistical model.

It’s enough to say that cognition is a black box process that takes sensory inputs to grow and learn, producing outputs like muscle commands.

You can abstract everything down to that level, doesn't make it any more right.

Yes, that's physics. We abstract things down to their constituent parts, to figure out what they are made up of, and how they work. Human brains aren't straightforward computers, so they must rely on statistics if there is nothing non-physical (a "soul" or something).

I'm not saying we understand the brain perfectly, but everything we learn about it will follow logic and math.

Not necessarily, there are a number of modern philosophers and physicists who posit that "experience" is incalculable, and further that it's directly tied to the collapse of the wave function in quantum mechanics (Penrose-Hameroff; Orch OR). I'm not saying they're right, but Penrose won a Nobel Prize in Physics (for his work on black holes) and he says it can't be explained by math.

I agree experience is incalculable, not because it is some special immaterial substance but because experience just is objective reality from a particular context frame. I can do all the calculations I want on a piece of paper describing the properties of fire, but the paper it's written on won't suddenly burst into flames. A description of an object will never converge into a real object, and by no means will descriptions of reality ever become reality itself. The notion that experience is incalculable is just uninteresting. Of course, we can say the same about the wave function. We use it as a tool to predict where we will see real particles. You cannot compute the real particles from the wave function either, because it's not a real entity but a description of relationships between observations (i.e. experiences) of real things.

(working with the assumption we mean stuff like ChatGPT) mKay... Tho math and logic are A LOT more than just statistics. At no point did we prove that statistics alone is enough to reach the point of cognition. I'd argue no statistical model can ever reach cognition, simply because it averages too much. The input we train it on is also fundamentally flawed. Feeding it only text skips the entire thinking and processing step of creating an answer. It literally just takes text and predicts, based on previous answers, what's the most likely text. It's literally incapable of generating or reasoning in any other way than was already spelled out somewhere in the dataset. At BEST, it's a chat simulator (or dare I say...language model?), it's nowhere near an intelligence emulator in any capacity.
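For what "takes text and predicts the most likely text" looks like in its crudest form, here's a minimal sketch assuming a bigram word counter. Real LLMs are neural networks over tokens with long context, so this is a far simpler stand-in; the function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words followed it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)

print(most_likely_next(model, "the"))  # most frequent follower of "the" in the corpus
print(most_likely_next(model, "dog"))  # "dog" never appeared, so there is nothing to predict
```

A counter like this really can only repeat word pairs that were in its data. Whether that limitation carries over to much larger models is exactly what this thread is arguing about.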