I'm not too informed about DeepSeek. Is it real open-source, or fake open-source?


Didn't it turn out that they used 10,000 Nvidia cards with the 100-series chips, and that the "low-level success" and "low cost" claims are a lie?

Also, they aren't actually open source? Only the weights are open?

Not exactly sure what "dominating" a market means, but the title makes a good point: innovation requires much more cooperation than competition. And the 'AI race' between nations is an antiquated framing pushed by the media.

Wall Street’s panic over DeepSeek is peak clown logic—like watching a room full of goldfish debate quantum physics. Closed ecosystems crumble because they’re built on the delusion that scarcity breeds value, while open source turns scarcity into oxygen. Every dollar spent hoarding GPUs for proprietary models is a dollar wasted on reinventing wheels that the community already gave away for free.

The Docker parallel is obvious to anyone who remembers when virtualization stopped being a luxury and became a utility. DeepSeek didn’t “disrupt” anything—it just reminded us that innovation isn’t about who owns the biggest sandbox, but who lets kids build castles without charging admission.

Governments and corporations keep playing chess with AI like it’s a Cold War relic, but the board’s already on fire. Open source isn’t a strategy—it’s gravity. You don’t negotiate with gravity. You adapt or splat.

Cheap reasoning models won’t kill demand for compute. They’ll turn AI into plumbing. And when’s the last time you heard someone argue over who owns the best pipe?

Governments and corporations still use the same playbooks because they're still oversaturated with Boomers who haven't learned a lick since 1987.

Pretty sure Valve has already realized the correct way to be a tech monopoly is to provide a good user experience.

Just stay far, far away from their forums.

Idk, I kind of disagree with some of their updates at least in the UI department.

They treat customers well, though.

Yeah. Steam and I are getting older. Would be nice to adjust simple things like text size in the tool.

Also, that ‘Live’ shit bothers me. Live means live, not ‘was recorded live, and now presented perpetually as LIVE’.

I hate to disagree, but IIRC DeepSeek is not an open-source model but an open-weight one?

It's tricky. There is code involved, and the code is open source. There is a neural net involved, and it is released as open weights. The part that is not available is the "input" that went into the training. This seems to be a common way in which models are released as both "open source" and "open weights", but you wouldn't necessarily be able to replicate the outcome with $5M or whatever it takes to train the foundation model, since you'd have to guess about what they used as their input training corpus.

Definitions are tricky, and especially for terms that are broadly considered virtuous/positive by the general public (cf. "organic") but I tend to deny something is open source unless you can recreate any binaries/output AND it is presented in the "preferred form for modification" (i.e. the way the GPLv3 defines the "source form").

A disassembled/decompiled binary might nominally be in some programming language (suitable input to a compiler for that language), but that doesn't make it the source code for that binary, because it is not in the form that the entity best placed to make a modified version of the binary (normally the original author) would prefer to make modifications in.
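A small illustration of that point using Python's standard `dis` module. The function `apply_discount` is a hypothetical example: its bytecode is the compiled artifact, and the disassembly is a faithful, readable description of it, yet nobody would pick the disassembly as the form in which to change the function.

```python
import dis


def apply_discount(price: float, rate: float) -> float:
    """Hypothetical example: this source is the 'preferred form for modification'."""
    return price * (1 - rate)


# The compiled artifact: raw bytecode, loosely analogous to a shipped binary.
raw_bytes = apply_discount.__code__.co_code

# A disassembly is nominally readable, but it is a poor form to edit and recompile.
listing = [(ins.opname, ins.argrepr) for ins in dis.get_instructions(apply_discount)]

if __name__ == "__main__":
    print(type(raw_bytes).__name__, len(raw_bytes))
    for opname, arg in listing:
        print(f"{opname:<20} {arg}")
```

The exact opcode names vary between Python versions, which is itself a hint that this is an output format, not a source format.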

I view it as the source code of the model is the training data. The code supplied is a bespoke compiler for it, which emits a binary blob (the weights). A compiler is written in code too, just like any other program. So what they released is the equivalent of the compiler's source code, and the binary blob that it output when fed the training data (source code) which they did NOT release.
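A toy sketch of that analogy, under heavy simplifying assumptions: a one-feature linear model stands in for an LLM, and the hypothetical functions `train` and `predict` stand in for the released training and inference code. Anyone handed `released_weights` can run `predict`, but only whoever holds `private_data` can rebuild those weights or retrain them differently.

```python
from typing import List, Tuple

Weights = Tuple[float, float]  # (slope, intercept): the released "binary blob"


def train(data: List[Tuple[float, float]], epochs: int = 5000, lr: float = 0.01) -> Weights:
    """The 'compiler': deterministic full-batch gradient descent for y = w*x + b."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        # Mean-squared-error gradients with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def predict(weights: Weights, x: float) -> float:
    """Inference: needs only the weights, never the training data."""
    w, b = weights
    return w * x + b


# The "source code" in this analogy: kept private by the publisher.
private_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]  # points on y = 2x + 1

# The "binary": all that an open-weights release hands out.
released_weights = train(private_data)

if __name__ == "__main__":
    print(released_weights)
    print(predict(released_weights, 10.0))
```

Real training runs are nowhere near this cheap or this deterministic, but the asymmetry is the same: the weights let you use the model, while the data is what lets you rebuild it.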

This is probably the best explanation I've seen so far and really helped me actually understand what it means when we talk about "weights" for LLMs.

DeepSeek shook the AI world because it’s cheaper, not because it’s open source.

And it’s not really open source either. Sure, the weights are open, but the training materials aren’t. Good luck looking at the weights and figuring things out.

I think it's both. OpenAI was valued at a certain point because of a perceived moat of training costs. The cheapness killed the myth, but open sourcing it was the coup de grace as they couldn't use the courts to put the genie back into the bottle.

True, but they also released a paper that detailed their training methods. Is the paper sufficiently detailed such that others could reproduce those methods? Beats me.

I would say that in comparison to the standards used for top ML conferences, the paper is relatively light on the details. But nonetheless some folks have been able to reimplement portions of their techniques.

ML in general has a reproducibility crisis. Lots of papers are extremely hard to reproduce, even if they're open source, since the optimization process is partly random (ordering of batches, augmentations, nondeterminism in GPUs etc.), and unfortunately even with seeding, the randomness is not guaranteed to be consistent across platforms.
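A minimal sketch of two of those sources of variation, in pure Python with made-up data. The first lines show that floating-point addition is not associative, which is why GPU reductions that sum in a varying order can differ in the last bits. The hypothetical `sgd_fit` then shows batch ordering: a fixed seed reproduces the result on this one setup, while a different seed gives different weights from the same data.

```python
import random
from typing import List, Tuple

# 1) Floating-point addition is not associative: the same three numbers summed
#    in a different order give a different result in the last bits.
left = (0.1 + 0.2) + 0.3
right = 0.1 + (0.2 + 0.3)


def sgd_fit(data: List[Tuple[float, float]], seed: int,
            epochs: int = 50, lr: float = 0.01) -> float:
    """Fit y approximately equal to w * x with one-example SGD; the seed fixes batch order."""
    rng = random.Random(seed)  # private RNG so global random state is untouched
    order = list(data)
    w = 0.0
    for _ in range(epochs):
        rng.shuffle(order)  # 2) batch ordering is the random part
        for x, y in order:
            w -= lr * 2 * (w * x - y) * x
    return w


# Slightly noisy data around y = 2x (made up for illustration).
data = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8)]

if __name__ == "__main__":
    print(left, right, left == right)
    print(sgd_fit(data, seed=0) == sgd_fit(data, seed=0))
    print(sgd_fit(data, seed=0), sgd_fit(data, seed=1))
```

Seeding pins down the ordering here only because everything runs sequentially in one process; it does nothing about the summation-order effect once the arithmetic is spread across parallel hardware.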

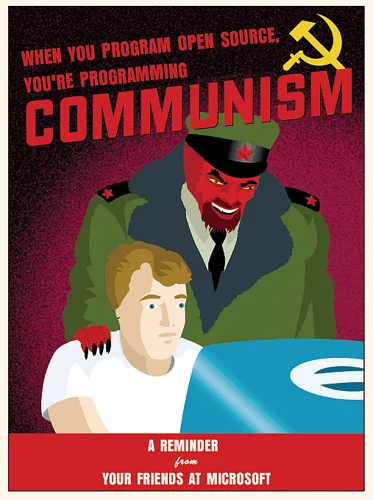

Time to dust off this old chestnut

I remember this being some sort of Apple meme at some point. Hence the gumdrop iMac.

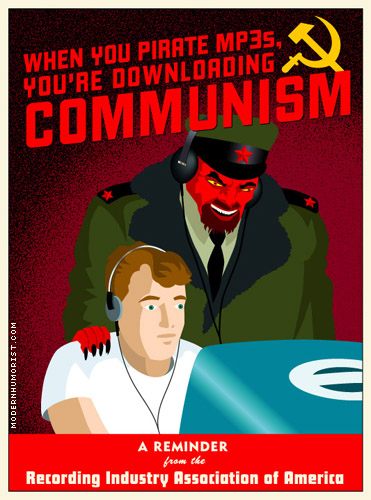

I think the inspiration is from Modern Humorist (note: there's no HTTPS)

Edit: here's the image in question:

You’re right. That’s it!

This was likely around the time of the switch to OS X, whose Darwin core borrows heavily from FreeBSD.

This guy found the original.

https://lemmy.world/comment/15023547

It was originally about Napster / Gnutella.

Personally, I think Microsoft open sourcing .NET was the first clue open source won.

DeepSeek is not open source.

The model weights and research paper are, which is the accepted terminology nowadays.

It would be nice to have the training corpus and RLHF too.

> the accepted terminology

No, it isn't. The OSI specifically requires that the training data be available, or at the very least that the source of the data and any fee for it be given, so that a user could get the same copy themselves. That's the purpose of something being "open source". Open source doesn't just mean free to download and use.

https://opensource.org/ai/open-source-ai-definition

> Data Information: Sufficiently detailed information about the data used to train the system so that a skilled person can build a substantially equivalent system. Data Information shall be made available under OSI-approved terms.
>
> In particular, this must include: (1) the complete description of all data used for training, including (if used) of unshareable data, disclosing the provenance of the data, its scope and characteristics, how the data was obtained and selected, the labeling procedures, and data processing and filtering methodologies; (2) a listing of all publicly available training data and where to obtain it; and (3) a listing of all training data obtainable from third parties and where to obtain it, including for fee.

As per their paper, DeepSeek R1 required a very specific training data set, because when they tried the same technique with less curated data, they got R1-Zero, which basically ran fast and spat out a gibberish salad of English, Chinese and Python.

People are calling DeepSeek open source purely because they called themselves open source, but they seem to be just another free-to-download, black-box model. The best comparison is to Meta's Llama, which, weirdly, nobody has decided is going to upend the tech industry.

In reality, "open source" is a terrible term for this; it's a very loose fit when what people are basically trying to say is that anyone could recreate or modify the model because they have the exact 'recipe'.

The training corpus of these large models seems to be “the internet, YOLO”, where it’s fine for them to download every book and paper under the sun, but if a normal person does it...

Believe it or not:

I wouldn’t call it the accepted terminology at all. Just because some rich assholes try to will it into existence doesn't mean we have to accept it.

> The model weights and research paper are

I think you're conflating "open source" with "free"

What does it even mean for a research paper to be open source? That they release a docx instead of a pdf, so people can modify the formatting? Lol

The model weights were released for free, but you don't have access to their source, so you can't recreate them yourself. Microsoft Paint isn't open source just because they release the machine instructions for free. Model weights are the AI equivalent of an exe file. To extend that analogy, quants, LoRAs, etc. are like community-made mods.
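To stretch the "mods" analogy: both quantization and LoRA-style adapters operate on the released weights alone, with no access to the training data. A minimal pure-Python sketch, assuming a toy list-of-lists matrix representation; the function names are hypothetical, real quantization schemes (per-channel scales, 4-bit formats) are more involved, and real LoRA learns `B` and `A` by fine-tuning and scales them by alpha/r rather than the single `alpha` used here.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: small ints plus one float scale."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # fall back to 1.0 if all zeros
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Approximate reconstruction of the original weights."""
    return [v * scale for v in q]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product, enough for a toy example."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def apply_lora(w: Matrix, b: Matrix, a: Matrix, alpha: float = 1.0) -> Matrix:
    """LoRA-style patch W' = W + alpha * (B @ A); the base W is not modified."""
    delta = matmul(b, a)
    return [[w[i][j] + alpha * delta[i][j] for j in range(len(w[0]))]
            for i in range(len(w))]


if __name__ == "__main__":
    q, s = quantize_int8([0.5, -1.27, 0.0, 1.0])
    print(q, s, dequantize(q, s))

    base = [[1.0, 0.0], [0.0, 1.0]]  # "released" 2x2 weight matrix
    b = [[1.0], [2.0]]               # 2x1 adapter factor
    a = [[0.5, 0.5]]                 # 1x2 adapter factor (rank 1)
    print(apply_lora(base, b, a))    # [[1.5, 0.5], [1.0, 2.0]]
```

Like a game mod, the adapter is a small patch shipped separately from the base weights, which is part of why people can customize open-weight models without ever seeing the training data.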

To be open source, they would have to release the training data and the code used to train it. They won't do that because they don't want competition. They just want to do the Facebook Llama thing, where they hope someone uses it to build the next big thing, so that Facebook can copy them and destroy them with a much better model that they didn't release, force them to sell, or kill them with the license.

> the accepted terminology nowadays

Let's just redefine existing concepts to mean things that are more palatable to corporate control why don't we?

If you don't have the ability to build it yourself, it's not open source. DeepSeek is "freeware" at best. And that's to say nothing of what the data is, where it comes from, and the legal ramifications of using it.

A lot of other AI models can say the same, though. Facebook's is. Xitter's is. I still wouldn't trust those at all, or any other model that publishes no reproducible code.

They are trying to make it accepted, but it's still contested. Unless the training data is provided, it's not really open.

The term open source is not free to redefine, nor has it been redefined.