this post was submitted on 09 Nov 2023

Science Memes

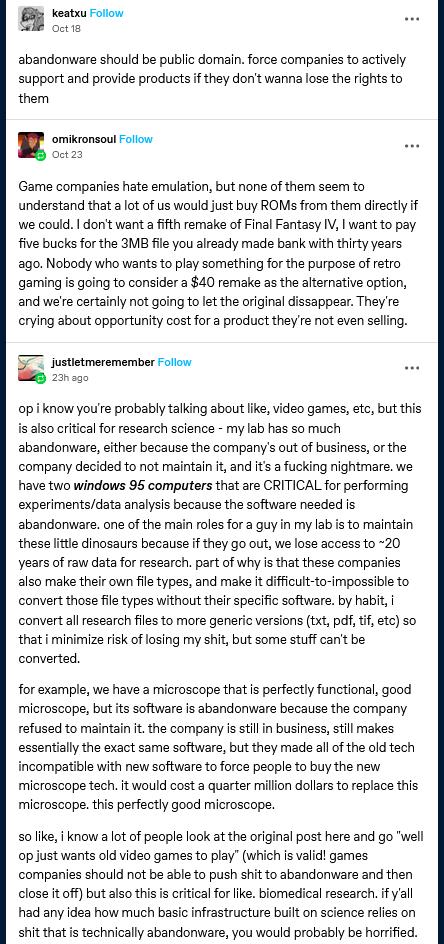

Alright, I know this is going to get some hate, and I fully support emulation and an overhaul of US copyright and patent law, but justmeremember's supportive post is just bad. This is the same bad practice that many organizations, especially in manufacturing, have problems with. If the ~20 years of raw data is so important, then why is it sitting on hardware that is decades past end-of-life?

If it is worth the investment, then why not invest in a way to convert the data into something less dependent on EOL software? There are lots of ways to do this, cheap and not.

But even worse, I bet there is 'raw' data that's only a year old still sitting on those machines. I don't know if the 'lab guy' actually pulls a salary or not, but maybe hire someone to begin actually solving the problem instead of playing an eventually losing game?

In ~20 years, they couldn't cut slivers from the budget to eventually invest in something that would perhaps 'reset the clock'?

At this point I wouldn't be surprised to find a post of them complaining about Excel being too slow and unstable because they've been using it as a database for ~20 years' worth of data, either.

Ah. So...blame the victim. Cause apparently capitalism is, like, perfect or something.

The company selling the software arbitrarily created a problem for no reason other than greed. And yet, the ones not forking over more money are the problem.

Yeah, hard no from me on your entire argument, buddy.

Obviously the company is the bad guy here. But if the research data is so important, the lab should try to solve their problem instead of just praying that the 20 year old machine won't fail.

I didn't say capitalism is perfect nor did I imply it.

So hypothetically let's say the vendor lost the rights to the software since it is abandonware -- great. I'd love it.

What changes for justmeremember's situation? Nothing changes.

I suppose your only issue here is that the software vendor or some entity should support it forever. OK, so why didn't they just choose a FOSS alternative or make one themselves? If not then, why not now? There is nothing that stops them from the latter other than time and effort. Even better, everyone else could benefit!

Does that make justmeremember just as culpable here or are they still the victim with no reasonable way to a solution?

I posted simply because this specific issue is much too common. Just as common is the failure to actually solve it, regardless of the abandonware argument, instead of stop-gapping and kicking it down the line until access to the data is gone forever.

If no entity wants to take on support, they should be forced to release the source code to the Public Domain. Copyright is a social contract, not an entitlement -- if you don't hold up your end of the bargain of keeping it available, you deserve to lose it.

Well, I think a better solution would be to deliver all source code with the compiled software as well. I suppose that would extend to the operating system itself and the hope that there'd be enough motivation for skillful folks to maintain that OS and support for new hardware. Great, that would indeed solve the problem and is a potential outcome if digital rights are overhauled. This is something I fully support.

What is stopping them now from solving access to this data, even if it's in a proprietary format?

Really, again, I don't take issue with the abandonware argument but rather with the situation in the post itself. Source code availability and the rights surrounding it are only one part of the larger problem in the post.

Source code and the rights to it aren't the root cause of the problem in the post that I was addressing. They could facilitate a solution, sure, but given that there is at least ~20 years of data at risk currently, there were also ~20 years of potential labor hours to solve it. Yet, instead, they chose to 'solve' it in a terrible way. That is what I take issue with.

This is really not a problem that's fixed by open source.

The microscope will be controlled by a card that only plugs into 30 year old desktops. If you open source the drivers for it this only gives you the source code to drivers for Windows 95. These drivers will be incredibly hacky and hard coded and probably die if you install a service pack.

Having access to the source code doesn't let you replace the entire stack, because you're still physically tied to old hardware that is worse than a Raspberry Pi, and even just making sure that you can update Windows is a feat of engineering.

At the very least, being able to read the source code gives you a Hell of a head start on writing a new driver for an appropriate OS (and by that I mean Linux, obviously). Saves a whole reverse-engineering step.

Also, the "a card that only plugs into 30 year old desktops" thing isn't quite as insurmountable as you think.

I'm not saying creating an entire project to adapt the controller and software stack to modern systems would be cheap or easy, but it's possible -- and more to the point, seemingly less expensive than buying the new microscope for "hundreds of thousands of €" (especially in the long run, since the company is likely to pull the same shit over and over again), even if you've got to pay a gaggle of comp-e grad students to put it together for you.

I mean the most upvoted answer in your link says it often is that insurmountable.

Basically, it's a huge gamble and a substantial software engineering effort even when you know what you're doing and source code is available.

It's not surprising that biologists keep using old machines until they die.

Because they're a science research lab not a computer programming lab? Maybe I'm misunderstanding what you're saying but they're not the right people, nor in the right situation to be solving this problem.

It isn't necessarily a computer programming problem either. Rather, it is an IT problem at least in part, one that the poster states is the primary job of his 'lab guy': to maintain two ancient Windows 95 computers specifically. That person must know enough to handle the troubleshooting and replacement of the hardware, and certainly at least the transfer of data from their own spinning hard drives. Why not instead put that technical expertise into actually solving the problem long-term? Why not just run both in qemu and use hardware passthru if required? At least then, you would rid yourself of the ticking time-bomb of the hardware and its diminishing availability. That RAM that is no longer made isn't going to last forever. They don't even need to know much about how it all works. There are guides available, even for Windows 95.
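To make the qemu suggestion a bit more concrete, here is a minimal sketch of what the launch command could look like, assuming a raw disk image of one of the Windows 95 machines already exists and the instrument talks over a serial or parallel port on a Linux host. The image name `win95.img`, the 64 MB of RAM and the device paths are placeholders, not anything from the original post. The script only builds the command so it can be reviewed before anyone runs it.

```python
import shlex


def build_qemu_command(disk_image, memory_mb=64,
                       serial_device=None, parallel_device=None):
    """Build an argument list for a 32-bit QEMU guest from an old PC's disk image.

    All defaults are illustrative assumptions, not values from the post.
    """
    cmd = [
        "qemu-system-i386",
        "-m", str(memory_mb),      # guest RAM in MB; Windows 95 needs very little
        "-cpu", "pentium",         # period-appropriate CPU model
        "-vga", "cirrus",          # emulated card that old Windows has drivers for
        "-drive", f"file={disk_image},format=raw,if=ide",
    ]
    if serial_device:
        # On a Linux host this hands the host serial port to the guest.
        cmd += ["-serial", serial_device]
    if parallel_device:
        # Same idea for a host parallel port, e.g. /dev/parport0 on Linux.
        cmd += ["-parallel", parallel_device]
    return cmd


if __name__ == "__main__":
    # Print the command for review instead of launching it.
    print(shlex.join(build_qemu_command("win95.img", serial_device="/dev/ttyS0")))
```

This covers the easy case only. If the instrument hangs off a proprietary card rather than a standard port, forwarding a port like this won't be enough, and that is exactly the edge case argued about elsewhere in the thread.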

Perhaps there are other hurdles, such as running something on ISA, but even so, eventually it isn't going to matter. Primarily, though, the hurdle seems to be the software and the data it provides access to. Does it really have some sort of ancient hardware dependency? Maybe. But in all that time, this 'lab guy' whose main role is just these two machines must have had some time to experiment and figure this out. The data must be copyable, even as a straight hard drive image, even if it isn't a flat file (extremely doubtful, but it doesn't matter). I mean, the data is, by the author's own emphasis, CRITICAL.
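As a rough illustration of the "straight hard drive image" point, the sketch below copies a source byte for byte and checks that the copy hashes the same. It assumes the old drive can be read as a plain file or block device from another machine (for example through a USB-to-IDE adapter); the function names and paths are made up for the example. A failing drive would call for a tool that tolerates read errors, which this does not.

```python
import hashlib

CHUNK_SIZE = 1024 * 1024  # read 1 MiB at a time so large drives don't fill RAM


def sha256_of(path, chunk_size=CHUNK_SIZE):
    """Return the SHA-256 hex digest of a file or block device."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        while True:
            block = f.read(chunk_size)
            if not block:
                break
            digest.update(block)
    return digest.hexdigest()


def copy_image(src, dst, chunk_size=CHUNK_SIZE):
    """Copy src to dst byte for byte; return the SHA-256 of the bytes read."""
    digest = hashlib.sha256()
    with open(src, "rb") as fin, open(dst, "wb") as fout:
        while True:
            block = fin.read(chunk_size)
            if not block:
                break
            fout.write(block)
            digest.update(block)
    return digest.hexdigest()


def image_and_verify(src, dst):
    """Image src into dst, then re-read dst and confirm both hashes match."""
    source_hash = copy_image(src, dst)
    copy_hash = sha256_of(dst)
    if source_hash != copy_hash:
        raise IOError(f"verification failed: {source_hash} != {copy_hash}")
    return source_hash
```

Something like `image_and_verify("/dev/sdb", "win95.img")` would be the typical call on Linux, where `/dev/sdb` is only an example device name. The resulting image is both a backup and the input a virtual machine needs.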

If it is CRITICAL then why don't they give it that priority, even to the lone 'lab guy' that's acting IT?

Unless there's some big edge case here that simply hasn't been mentioned, something above and beyond the software they speak about, I feel like I've put more effort into typing these responses than it would take to effectively solve the hardware-on-life-support side of it. Solving the software dependency side? Depending on how the datasets are logically stored, it may require a software developer, but it also may not. However, simply virtualizing the environment would solve many, if not all, of these problems with minimal investment, especially for CRITICAL (their emphasis) data with ~20 years to figure it out. It would simply take a new computer, some sort of media to install Linux or *BSD on, and perhaps a COTS converter if it is using something like an LPT interface or even a DB9/DE-9 D-Sub (though you can still find modern motherboards, cards or even laptops capable of supporting those, and certainly a cheap USB adapter as well).

Anyway, I'm just going to leave it at that, I think I've said a lot on the subject to numerous people and do not have much more to add other than this is most likely solvable and outside of severe edge cases, solvable without expert knowledge considering the timeframe.

In a GxP environment with bespoke pharmaceutical equipment you are spending anywhere from 1-4000 collective labour hours and anywhere from 50k-250k for a control system upgrade, URS/TRS/SDS, Code risk assessment and review, and Qualification. To give you an idea, on a therapeutic manufacturing plant you're looking at a handful of two inch binders for the end to end system.

You are also (and more importantly) taking your resources off BAU or revenue generating improvement work for this project. You have a validated and qualified system, and even if you are spending $10-20k for a $500 like for like IPC or control card, the cost benefits of another 5 years is worth it.
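The trade-off described here can be put into rough numbers. This is only a back-of-the-envelope sketch using the figures quoted above (50k-250k for an upgrade, 10-20k per like-for-like part, roughly 5 more years per part). It assumes both ranges are in the same currency, and it ignores the labour hours, downtime risk and the chance that the part stops being available at all.

```python
def purchases_to_match(upgrade_cost, part_cost):
    """Number of like-for-like part purchases that cost as much as one upgrade."""
    return upgrade_cost / part_cost


def years_covered(purchases, years_per_part=5):
    """Years of operation bought by that many part purchases."""
    return purchases * years_per_part


# Figures as quoted in the comment above.
UPGRADE_COST_RANGE = (50_000, 250_000)
PART_COST_RANGE = (10_000, 20_000)

if __name__ == "__main__":
    # Least favourable case for the stop-gap: cheapest upgrade, priciest part.
    worst = purchases_to_match(UPGRADE_COST_RANGE[0], PART_COST_RANGE[1])
    # Most favourable case: priciest upgrade, cheapest part.
    best = purchases_to_match(UPGRADE_COST_RANGE[1], PART_COST_RANGE[0])
    for label, n in (("worst case", worst), ("best case", best)):
        print(f"{label}: {n} purchases, about {years_covered(n)} years")
```

Even with the cheapest upgrade set against the priciest part, the stop-gap stays cheaper for about 12.5 years on these numbers, which is the logic behind keeping a validated system running.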

If your equipment is a medical device, such as a diagnostic microscope, add another few binders of paperwork and regulator sign-off. There's a reason the equipment is so expensive.

If you get into the food industry, or general manufacturing the barriers to upgrade are much less. For your machine shop running floppy disks, it's a case of the external cost would approach the cost of a new machine, and the existing machine is fine.

As a maintenance professional this is the sort of risk management we conduct on an ongoing basis.

Because it's often not worth the investment. You would pay a shit ton for a one time conversion of data that is still accessible.

If the software became open source, because the company abandoned it, then that cost could potentially be brought down significantly.

You are also missing the parts where functional hardware loses support. Which is even worse in my opinion.

Still accessible for now, but less likely to stay accessible as the clock ticks, and less likely that compatible replacement hardware can be found.

If it isn't worth the investment, then what's the problem here? So what if the data is lost? It obviously isn't worth it.

OK but that isn't a counter point to what I said. If the hardware never fails, there is no problem either. What does that matter? And who cares if it was FOSS (though I am a FOSS advocate). What if nobody maintains it?

It doesn't matter because these aren't the real problems that this person is dealing with. Why not make some FOSS that takes care of the issue and runs on something that isn't on borrowed time and can endure not only hardware changes but operating system changes? That'd be relevant. It goes back to my point, doesn't it? Why not hire this person?

Clean room reverse engineering has case law precedent that essentially makes this low risk legally (certainly nil if the rights holder is defunct).

I didn't miss the point. I even made the point of having at least ~20 years to plan for it in the budget. Also the hardware has already lost support or there wouldn't be an issue, would there? You could just keep sustaining it without relying on a diminishing supply.

Or are we talking about some hypothetical hardware that wasn't mentioned? I guess I would have missed that point since it was never made.

I study biotech and am currently doing a traineeship in a university lab that likely operates in a similar way, albeit we are way less expensive to operate and require a bit less precision and safety than medical stuff (so for them the problems here are exacerbated).

Instruments like the ones we use are super expensive (we're talking in the order of hundreds of thousands of €), funding is not great, salaries are often laughable, the amount of data is huge and sometimes keeping it for many years is very important. On top of that, most people here barely understand computers and software beyond what they've used, which makes sense: they went to study biotech and environmental stuff, not computer science. There's an IT team in the university, but honestly they barely renew the security certificates for the login pages for the university wifi, so that's laughable, and granted they're likely underpaid, probably a result of low public funding as well. Sure, none of the problems would have much impact if we had all the funding in the world and people who know what they're doing, but that is not the case and that's why we need regulations.

What you're suggesting is treating the symptoms but not the disease. Making certain file formats compatible with other programs is not an easy undertaking, and certainly not for people without IT experience. Software for tools this expensive should either be open source from the get-go or immediately open-sourced as soon as it's abandoned or the company goes bust, because ain't no way we can afford to just throw out a perfectly functioning and serviceable tool that cost us 100s of thousands of €s just because a company went bust or decided that "no you must buy a whole new instrument we won't give you old software no more" in order to access the data they made incompatible with other stuff. Even with plenty of funding to work around the issue, that shouldn't be necessary; it's a waste of time and money just so a greedy company can make a few extra bucks.

So again and again and again, I was not arguing against the abandonware issue. I take issue with how the problem is being stop-gapped in this current situation and not in some hypothetical alternate timeline.

Great. I didn't imply otherwise.

So the lab guy maintaining the hardware of Windows 95-era computers barely understands computers. Got it. I suppose this same lab guy won't be able to do anything even if the source code was available and would still be doing the same job.

I didn't say it isn't. I said they've had ~20 years to figure it out. What would source code being available solve for them then? We could assume other people would come together to maintain it, sure. I've also talked about other solutions in replies. There are even more solutions. I wasn't trying to cover all bases there. It is just that, in the couple of decades this has been a problem, there has been plenty of time to solve it.

Oh OK, so that makes it less complicated. I thought the assumption here is that, in general, anyone in that lab barely understands a computer or how software works. So, who's going to maintain it? Hopefully, others, sure. I actually do talk about this in other replies and how it is something I support and that, in this case, the solution is to deliver the source with the product. FOSS is fantastic. Why can't that just be done now by these same interested parties? Or are we back to "can't computer" again? Then what good is the source code anyway?

But again, that's a "what-if things were different" which isn't what I was discussing. I was discussing this specific, real and fairly common issue of attempting to maintain EOL/EOSL hardware. It is a losing game and eventually, it just isn't going to work anymore.

Alright, the source code is available for this person. Let's just say that. What now?

What can be done right now is fairly straightforward, and there are numerous step-by-step guides: virtualize the environment. There is also the option to use hardware passthru, if there is some unmentioned piece of equipment. This could be done with some old laptop or computer that you probably tossed in the dumpster 10 years ago. The cost is likely just some labor. Perhaps that same lab guy can poke around or, if they're at a university, have their department reach out to the Computer Science or other IT-related teaching department and ask if there are any volunteers, even undergrads. There are very likely students that would want to take it on, just because they want to figure it out and nothing else.

There may be an edge case where it won't work due to some embedded proprietary hardware but that's yet another hypothetical issue at stake which is to open source hardware. That's great. Who's going to make that work in a modern motherboard? The person that you've supposed can't do that because they barely understand a computer at all?

In this current reality, with the specific part of the post I am addressing, the solution currently of sustaining something ancient with diminishing supply is definitely not the answer. That is the point I was making. There is a potential of ~20 years of labor hours. There is a potential of ~20 years of portioning of budgets. And let's not forget, according to them, it is "CRITICAL" to their operations. Yet, it is maintained by a "lab guy" who may or may not have anything other than a basic understanding of computers using hardware that's no longer made and hoping to cannibalize, use second hand and find in bins somewhere.

If this "lab guy" isn't up to the task, then why are they entrusted with something so critical with nothing done about it in approximately two decades? If they are up to the task, then why isn't a solution with longevity and real risk mitigation being taken on? It is a short-sighted mentality to just kick it down the road over and over again plainly hoping something critical is never lost.

If it's open source someone who knows about software can do it so that we don't have to. Doesn't even need to be a guy in the lab since he could just maintain a github repo and we'd use his thing.

Cause the instrument is important and replacing it, aside from being a massive waste of a perfectly functioning instrument, costs hundreds of thousands if not millions of € that we can't spend just because some company decided to be shit and some dude on Lemmy said we shouldn't use stop-gap measures for a problem that's completely artificial.

Why would you need to replace the instrument? You only need to replace the computers' functions. Why does it need to cost anything other than some other old workstation tossed into an ewaste bin years ago?

As opposed to some dude on Lemmy bemoaning that this just can't be solved without source, even though I've given actual solutions available now and for little to no material cost?

You have admitted that you'd still have to rely on someone else's expertise and motivation in the hopes that they'd solve the problem for the lab, yet, in my opinion, you're just discarding solutions that I've presented as if they aren't solutions at all because, at least in one of your points, that they'd have to rely on someone else's expertise and motivation in the hopes that they'd solve the problem for the lab. Even then, as I said, they've had decades to figure it out and there exist step-by-step instructions already that are freely available to help them solve the problem or get them almost to the end, assuming, there is some proprietary hardware never mentioned.

Anyway, I don't really have anything else to add to the conversation. So you can have the last word, if you wish.

Because the company made it so it only works with its specific software. Sure, maybe you could try and find a way to hack other software into it, but that is significantly harder than the stop-gap measures or full replacement. If you mess up you can end up breaking an extremely expensive tool, and, since funding is extremely limited (talking bare-minimum or even less sometimes), that means you won't risk it.

Yeah well one Lemmy dude actually knows the situation and how things work around a lab and one doesn't seem to understand. It isn't "little to no cost" evidently or most of us sure as shit wouldn't be dealing with stop-gap measures.

There would easily be a team of software engineers who would take on maintaining a lot of the abandonware software we use in a lab since there's a lot of folks who still rely on that software that the company abandoned, including people who know about software more. The key difference you don't understand is that if the source was open it wouldn't be necessary to have an IT enthusiast in every single lab that needs it, you only need 1 or 2 to maintain a repo.

First of all, not all abandonware is decades old. Secondly, people are already using the stop-gap solutions that you'd find on the internet, like never connecting the computer to the internet and praying nothing breaks, for example.