this post was submitted on 26 Feb 2024

747 points (95.7% liked)

Programmer Humor



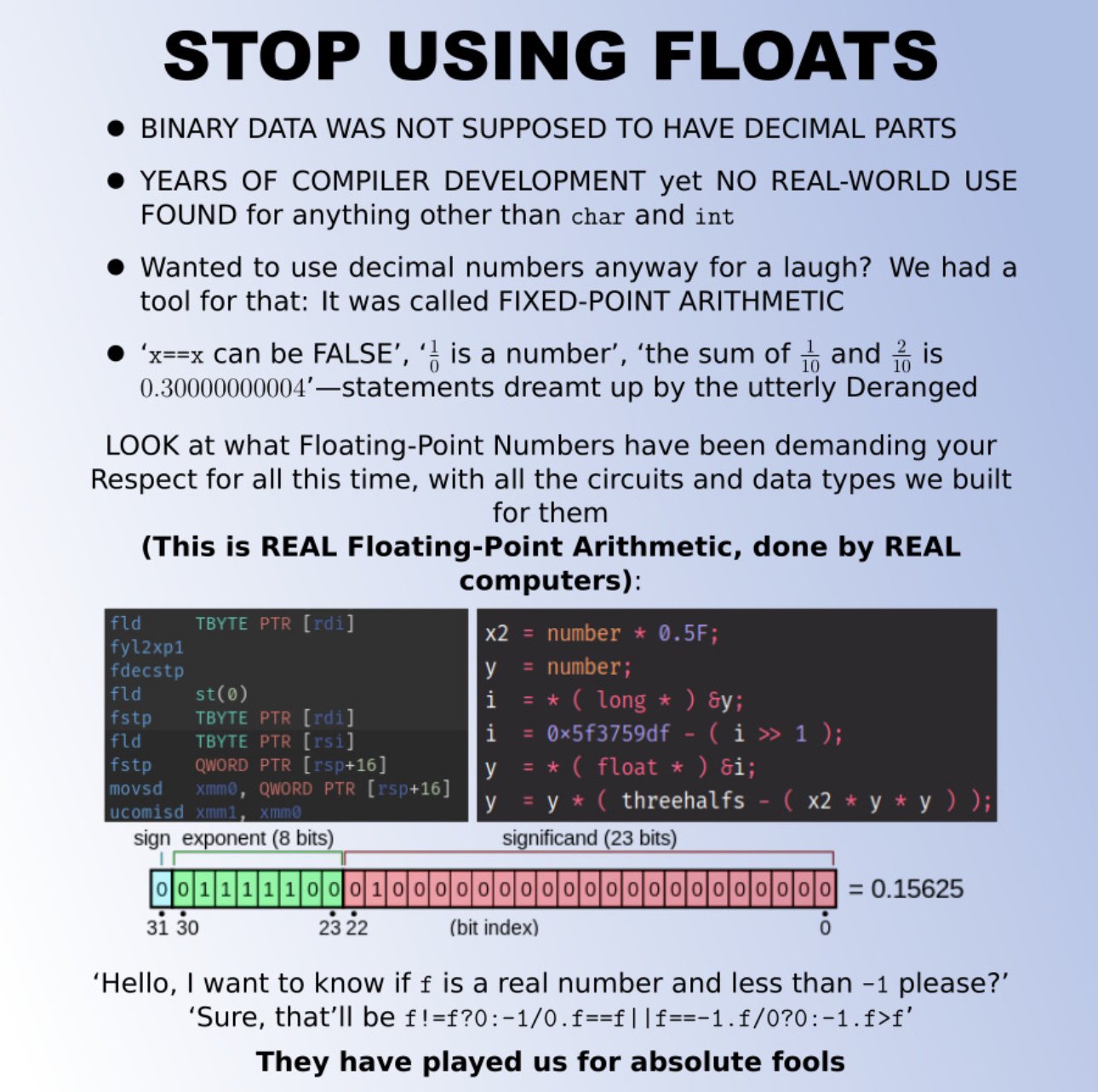

I have been thinking that maybe modern programming languages should move away from supporting all of IEEE 754 within a single data type.

Like, we've figured out that having a `null` value for everything, always, is a terrible idea. Instead, we've started encoding potential absence into our type system with `Option` or `Result` types, which also encourages dealing with such absence at the edges of our program, where it should be done.

Well, `NaN` is `null` all over again. Instead, we could make the division operator an associated function that returns a `Result`, and disallow `f64` from ever being `NaN`.

My main concern is interop with the outside world. So, I guess, there would still need to be an IEEE 754 compliant data type. But we could call it `ieee_754_f64` to really get on the nerves of anyone wanting to use it when it's not strictly necessary.

Well, and my secondary concern, that AI models would still want to just calculate with tons of floats without error handling at every intermediate step (even if the end result is sometimes a shitty vector of `NaN`s), would be covered by that type, too.

I agree with moving away from `float`s, but I have a far simpler proposal... just use a struct of two integers: a value and an offset. If you want to make it an IEEE standard where the offset is a four-bit signed value and the value is just a 28- or 60-bit regular old integer, then sure. But I can count the number of times I've used floats on one hand, and the number of times I wouldn't have been better off just using two integers on -0 hands.

Floats specifically solve the issue of how to store an absurdly large range of values in an extremely modest amount of space; that's not a problem we need to generalize a solution for. In most cases, having values up to the millions with three decimals of precision is good enough. Generally speaking, when you do float arithmetic, your numbers will be within an order of magnitude or two of each other... most people aren't adding the length of the universe in seconds to the width of an atom in meters... and if they are, floats don't work anyway.
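A minimal sketch of the two-integer idea in Rust. It assumes the offset is a base-10 exponent, so the struct stands for `value × 10^offset`, and that sums are computed by rescaling both operands to the smaller offset. The `Dec` type, its method names, and the `i64`/`i8` field widths are hypothetical choices for illustration, not an existing standard. Overflow is reported as `None` rather than handled.

```rust
/// Hypothetical two-integer number: represents `value * 10^offset`.
/// Base 10 is an assumption; the comment only says "a value and an offset".
#[derive(Debug, Clone, Copy)]
struct Dec {
    value: i64,
    offset: i8, // the comment proposes a 4-bit signed offset; i8 is used here for simplicity
}

impl Dec {
    fn new(value: i64, offset: i8) -> Self {
        Dec { value, offset }
    }

    /// Express `self.value` at a smaller (or equal) offset, e.g. (1, -1) as (10, -2).
    /// Returns None if `offset` is larger than ours or the result overflows i64.
    fn value_at(self, offset: i8) -> Option<i64> {
        let shift = i16::from(self.offset) - i16::from(offset);
        if shift < 0 {
            return None;
        }
        10i64.checked_pow(shift as u32)?.checked_mul(self.value)
    }

    /// Exact addition: align both operands to the smaller offset, then add as integers.
    fn checked_add(self, other: Dec) -> Option<Dec> {
        let offset = self.offset.min(other.offset);
        let sum = self.value_at(offset)?.checked_add(other.value_at(offset)?)?;
        Some(Dec::new(sum, offset))
    }

    /// Exact multiplication: multiply the values, add the offsets.
    fn checked_mul(self, other: Dec) -> Option<Dec> {
        let value = self.value.checked_mul(other.value)?;
        let offset = self.offset.checked_add(other.offset)?;
        Some(Dec::new(value, offset))
    }

    /// Numeric equality regardless of representation, so (1, -1) equals (10, -2).
    /// If rescaling overflows, the two numbers cannot be equal, so that case is `false`.
    fn eq_num(self, other: Dec) -> bool {
        let offset = self.offset.min(other.offset);
        match (self.value_at(offset), other.value_at(offset)) {
            (Some(a), Some(b)) => a == b,
            _ => false,
        }
    }
}

fn main() {
    let a = Dec::new(1, -1); // 0.1
    let b = Dec::new(2, -1); // 0.2
    let c = Dec::new(3, -1); // 0.3
    let sum = a.checked_add(b).expect("no overflow");
    println!("Dec: 0.1 + 0.2 == 0.3 -> {}", sum.eq_num(c)); // true
    println!("f64: 0.1 + 0.2 == 0.3 -> {}", 0.1_f64 + 0.2 == 0.3); // false

    // 1.5 * 2.25 = 3.375, exactly
    let p = Dec::new(15, -1).checked_mul(Dec::new(225, -2)).expect("no overflow");
    println!("{:?}", p); // Dec { value: 3375, offset: -3 }
}
```

With base-10 offsets, 0.1 + 0.2 really does equal 0.3, which it does not in `f64`. Division and irrational results (1/3, square roots) would still have to round somewhere, so a full design would also need a rounding rule.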

I think the concept of having a fractionally defined value with a magnitude offset was just deeply flawed from the get-go. We need some way to deal with decimal values on computers, but expressing those values as fractions is needlessly imprecise.
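Going back to the first comment's proposal, here is a minimal Rust sketch of a float that can never be `NaN`, with division as an associated function that returns a `Result`. `Real`, `DivError`, and `checked_div` are hypothetical names. Following the comment, only `NaN` is excluded (infinities are still allowed). `.get()` stands in for converting back to a plain IEEE 754 value for interop.

```rust
/// Hypothetical NaN-free float: the only constructor rejects NaN,
/// so every `Real` in the program is known to be a number.
/// Infinities are still allowed; only NaN is excluded.
#[derive(Debug, Clone, Copy, PartialEq, PartialOrd)]
struct Real(f64);

/// Reasons a division cannot produce a `Real`.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum DivError {
    /// x / 0 (also catches -0.0, since -0.0 == 0.0 in IEEE 754)
    DivisionByZero,
    /// e.g. inf / inf, which plain f64 would turn into NaN
    Undefined,
}

impl Real {
    /// Returns None instead of ever wrapping a NaN.
    fn new(x: f64) -> Option<Real> {
        if x.is_nan() {
            None
        } else {
            Some(Real(x))
        }
    }

    /// Escape hatch back to a plain IEEE 754 value, e.g. for interop.
    fn get(self) -> f64 {
        self.0
    }

    /// Division returns a Result instead of producing NaN; division by zero is rejected too.
    fn checked_div(self, rhs: Real) -> Result<Real, DivError> {
        if rhs.0 == 0.0 {
            return Err(DivError::DivisionByZero);
        }
        Real::new(self.0 / rhs.0).ok_or(DivError::Undefined)
    }
}

fn main() {
    let one = Real::new(1.0).unwrap();
    let zero = Real::new(0.0).unwrap();
    let inf = Real::new(f64::INFINITY).unwrap();

    // The error has to be dealt with here, at the point of division.
    match one.checked_div(zero) {
        Ok(q) => println!("1 / 0 = {}", q.get()),
        Err(e) => println!("1 / 0 failed: {:?}", e), // 1 / 0 failed: DivisionByZero
    }
    println!("{:?}", inf.checked_div(inf)); // Err(Undefined)
    println!("{:?}", Real::new(f64::NAN)); // None
}
```

Only division is shown. To keep the type truly `NaN`-free, other operations that yield `NaN` in IEEE 754 (such as `inf - inf` or `0 * inf`) would need the same treatment. Because `NaN` is excluded, such a type could in principle also implement `Eq` and a total `Ord`, which `f64` cannot.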