this post was submitted on 12 Dec 2023

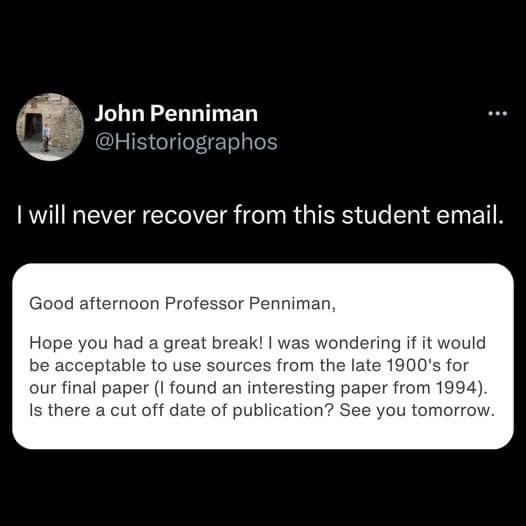

I’ve never heard of this and… why? You shouldn’t cite your sources if they’re too old? What? I get that you should try to find more recent sources for certain things, so the age of a source can be relevant if we’ve learned more in the meantime… but having a cutoff is stupid. Evaluate the sources, and if the information is outdated, criticize that.

It's not that you shouldn't cite them, it's that you shouldn't use them as a source at all because they're considered unreliable for the subject you're working on.

Depending on the point you've reached in your learning career, you might not be equipped to detect and criticize an outdated source.

Some fields also evolve so quickly that what was considered a fact just 20 years ago might have been superseded 5 years later, and then again 5 years after that. In those fields, only info from roughly the last 10 years is considered reliable, and everything older must be ignored unless you're working on a review of the evolution of knowledge in that field.

And what do you do if you want to reference how fast the field moves, or why certain methods aren't used anymore but were found 'good enough' back in the day? You would still have to use the old sources and cite them...

An absolute cutoff doesn't teach you anything... Guidance on how to tell good sources from bad or outdated ones would be much better.

I don't imagine a paper of that scope would have such a restriction.

Especially in fields like computer science where there are many commonly cited cornerstone papers written in the 60s-80s. So much modern stuff builds upon and improves that.