this post was submitted on 22 Aug 2023

1749 points (99.0% liked)

That is totally true, but that's a different direction than the danger in the marketing discussed above.

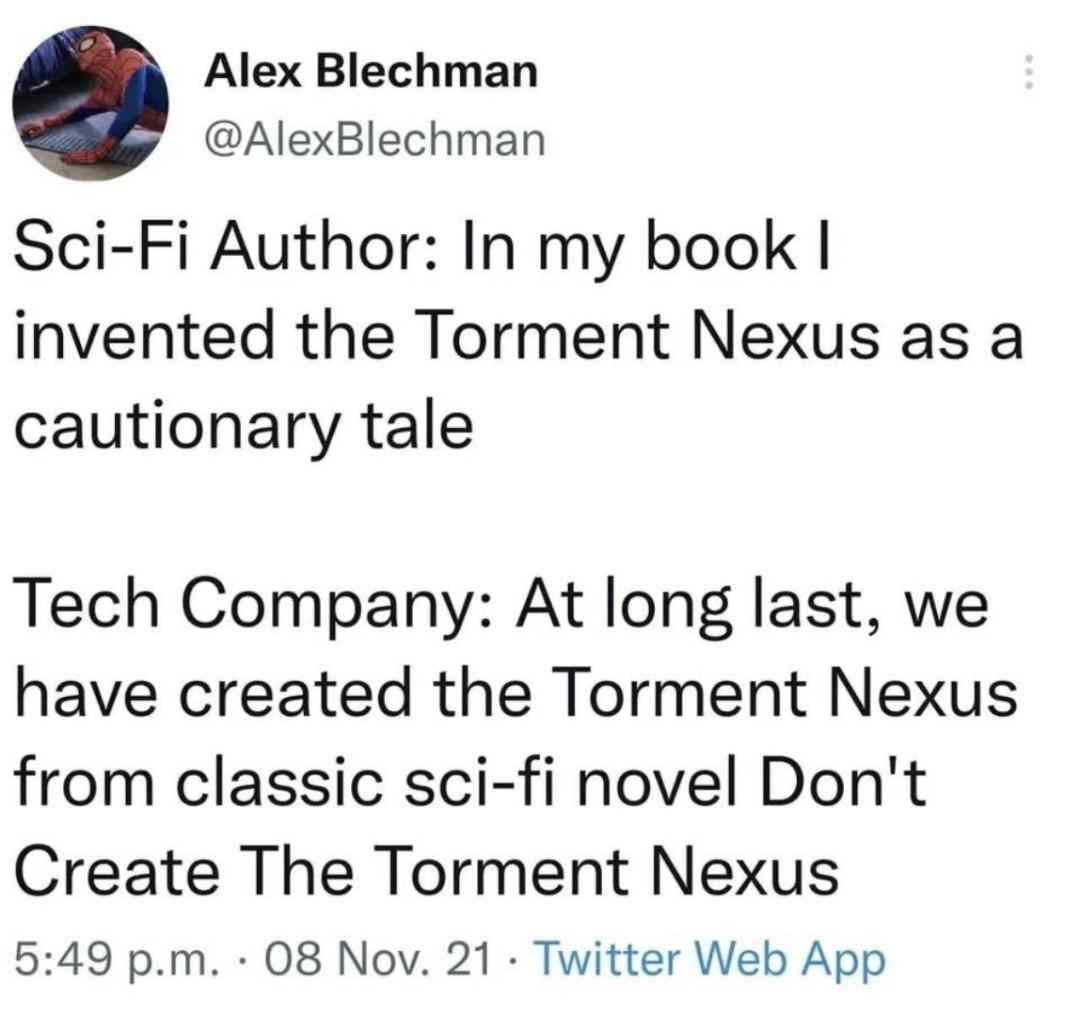

The media is full of "AI is so amazingly great, we are all going to lose our jobs and it will take over the world."

That's quite a different message from what's really the case, which is "AI is so shitty that it will literally kill people with bad advice when given the chance. And business leaders are so shit that they willingly trust AI, just because it's cheaper."

This is my biggest concern. I'm in a position where (potentially in the near future) I see AI being used as an excuse to do work quicker so we can focus more on other things, while we still have to review the AI response before agreeing/signing off. Reviewing for accuracy takes just as long as doing it yourself when the work is strongly regulated and it comes down to revisions and document numbers, never mind making a sound argument that is actually up to date with that documentation. So either I trust the AI shortcut and open myself up to errors, or I redo all the work for them. No gain in time efficiency, and shorter timelines. I'd rather make something myself and have it flag things that I can check, so I'm more sure of my own work. What I do shouldn't be faster, but it can be more error-free. It would take a lot of training, and retraining with each iteration of documentation changes. I could end up a slave to change, with more expectations and no actual improvement in the tools I have (in fact, more risk of issues with the tools being used).

I'm in agile development, in a reasonably safe-from-AI position (scrum master).

There has already been a trial of software development by AI, with a different generative AI in each agile role, and it worked.

Bard claims to be able to write unit tests.

I can imagine many IT jobs becoming less skilled.

Sorry this is months late, but it's cool to see it worked. I use software called XXX Agile. It's not the worst I work with, but as ported to my company it has some flaws. There's a long project to switch to something else for document control, and people who should know much better than me are worried it will fill some gaps but open us up to way more.

Yeah, they got the "will take our jobs" part, just not the "will take our jobs and be worse at it, and companies will still prefer it" part.

I was around in the 80s when we were losing all the manufacturing jobs, mostly to outsourcing, but they blamed automation and said "don't worry, there will be lots of good-paying jobs in the new service economy!" Guess what: they outsourced those too, and now they'll automate them.

It will be interesting to see how it plays out. For some jobs, mainly stuff that wasn't important but needed doing anyway (e.g. writing product listings on Amazon), this will be fatal. These jobs aren't coming back.

But for more skilled jobs, it will be interesting to see how they deal with it when AI messes up important stuff every single time.

On the other hand, managers have been doing the same consistently for a much longer time and they still exist. Let's see what happens.