this post was submitted on 04 Aug 2024

SneerClub

Considering that the idea of the singularity of AGI was the exponential function going straight up, I don't think this person understands the problem. Lol, LMAO foomed the scorpion.

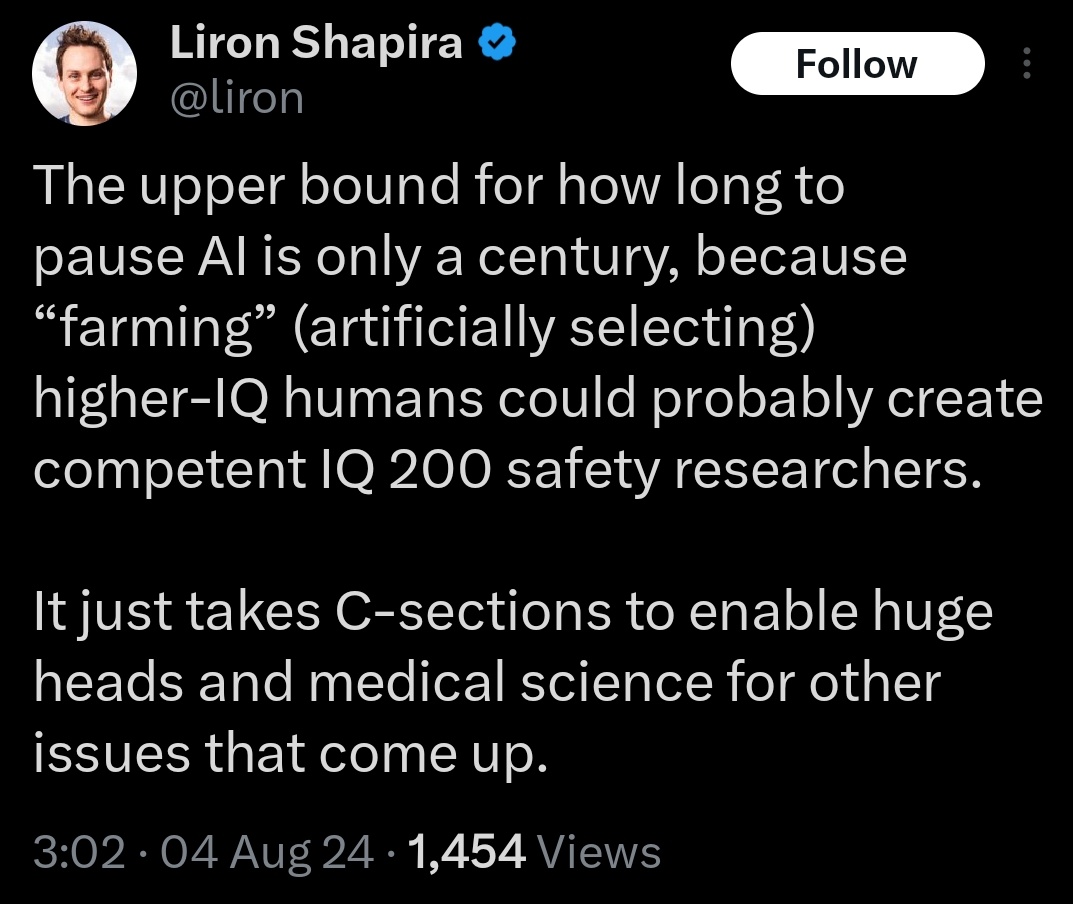

(Also that is some gross weird eugenics shit).

E: also isn't IQ a number that gets regraded every now and then, with a common upper bound of 160? I know the whole post is intended more as vaguely eugenics-aspirational, but still.

Anyway, time to start the lucrative field of HighIQHuman safety research. What do we do if the eugenics superhumans' goals don't align with humanity?

Smh, why do I feel like I understand the theology of their dumb cult better than its own adherents? If you believe that one day AI will foom into a 10 trillion IQ super being, then it makes no difference at all whether your AI safety researcher has 200 IQ or spends their days eating rocks like the average LW user.

Oh absolutely! This is the entire delusion collapsing on itself.

Bro, if intelligence is, as the cult claims, fully contained self-improvement, --you could never have mattered by definition--. If the system is closed, and you see the point of convergence up ahead... what does it even fucking matter?

This is why Pascal's wager defeats all forms of maximal utilitarianism. Again, if the system is closed around a set of known alternatives, then yes, it doesn't matter anymore. You don't even need intelligence to do this. You can do it with sticks and stones by imagining away all the other things.