MorphMoe

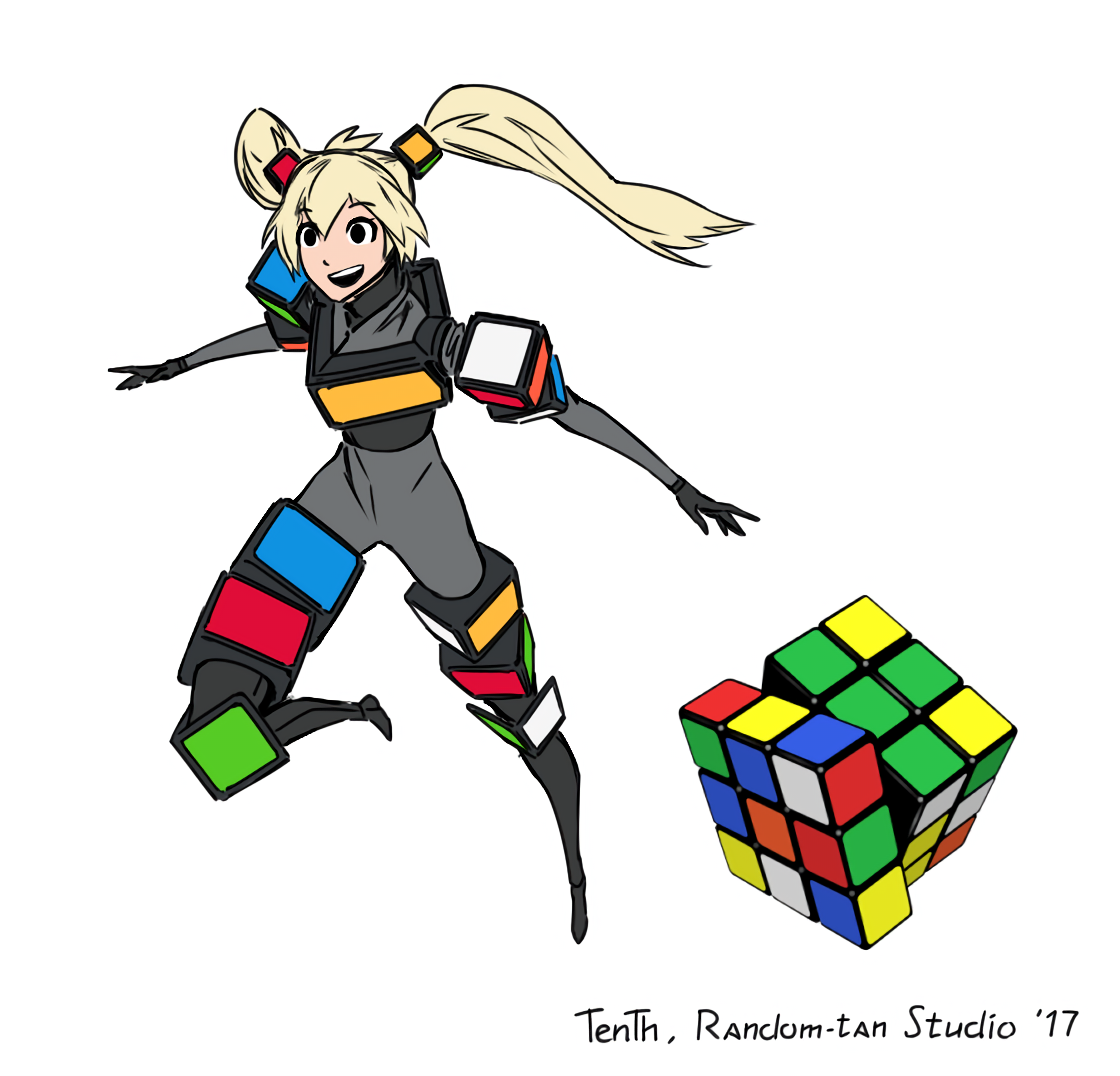

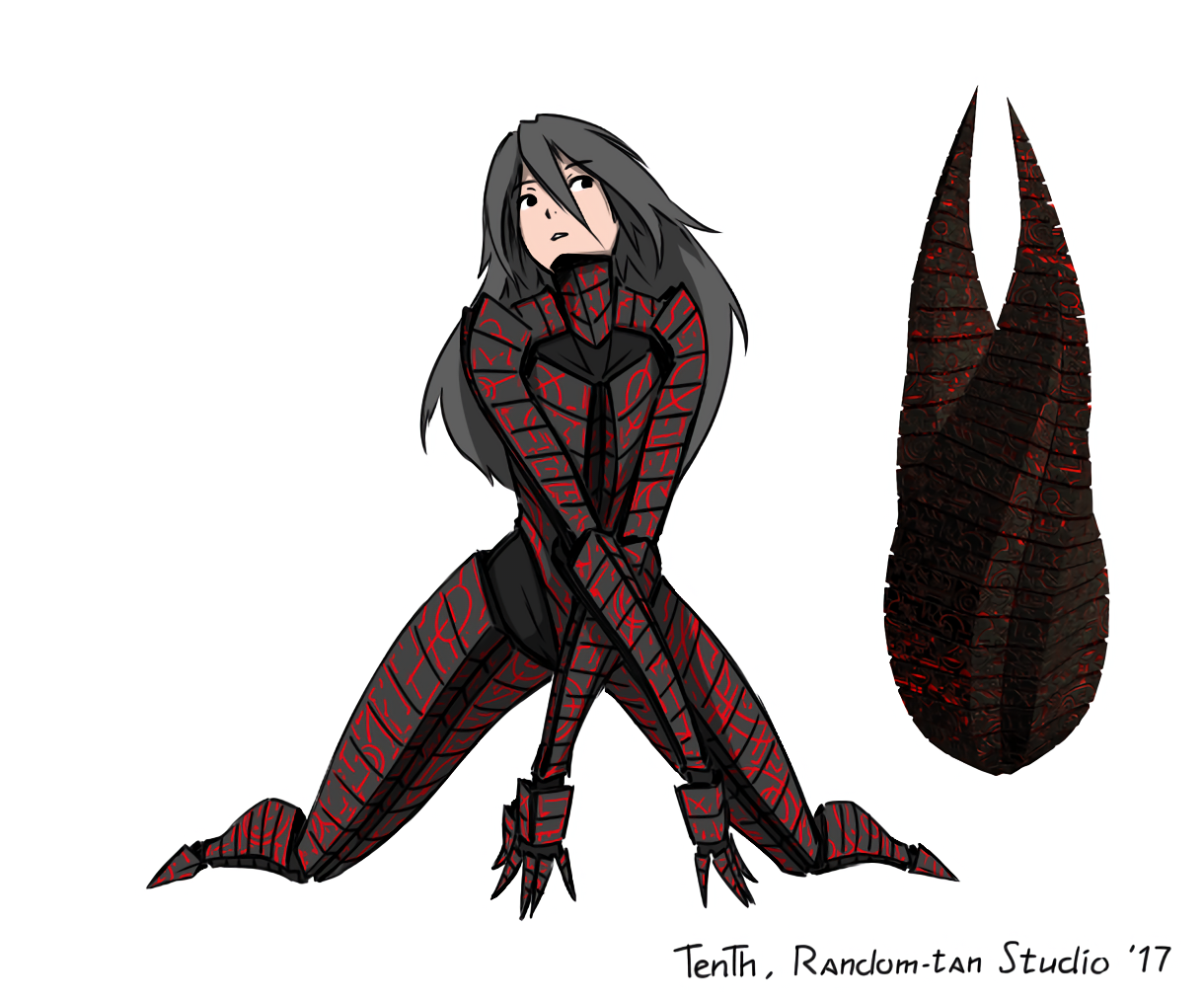

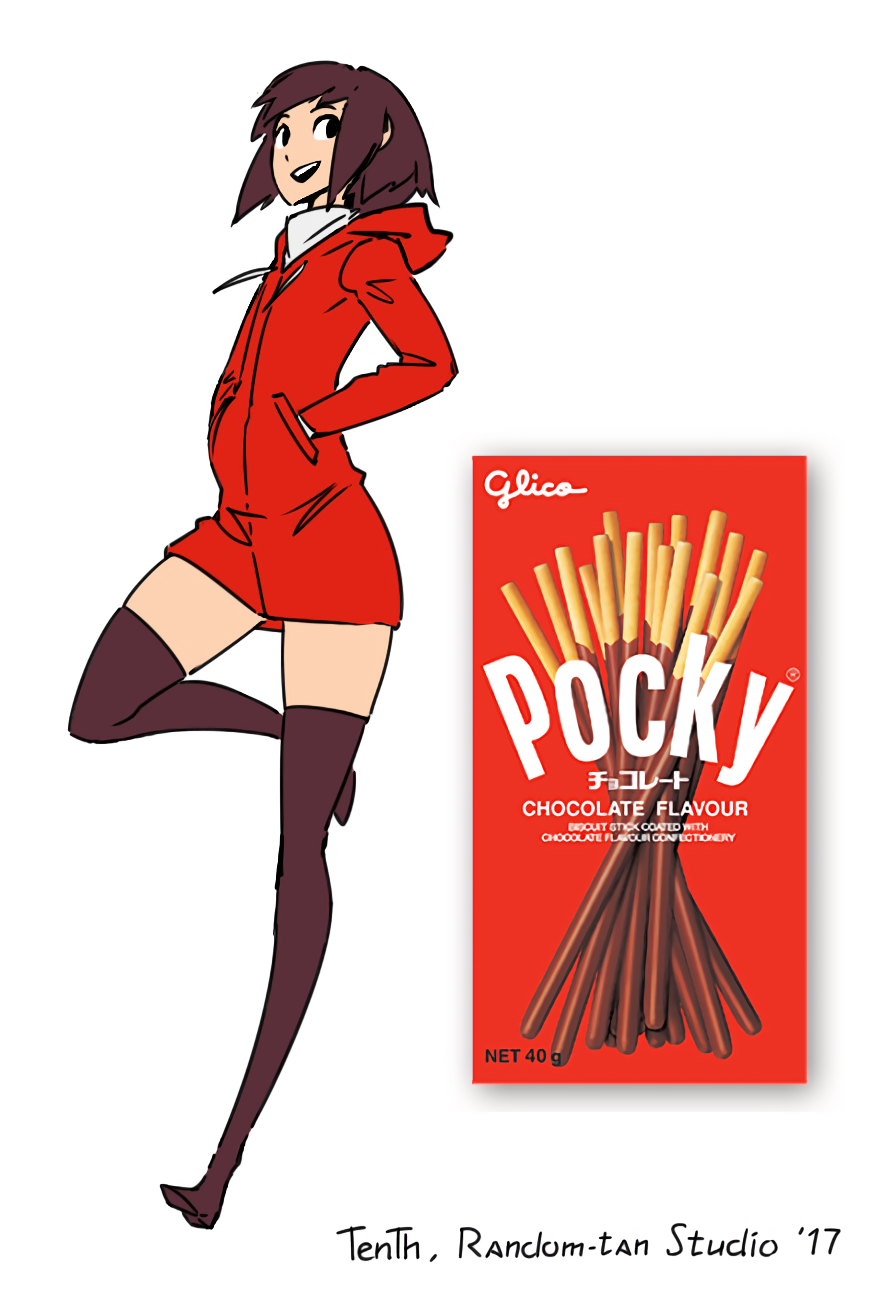

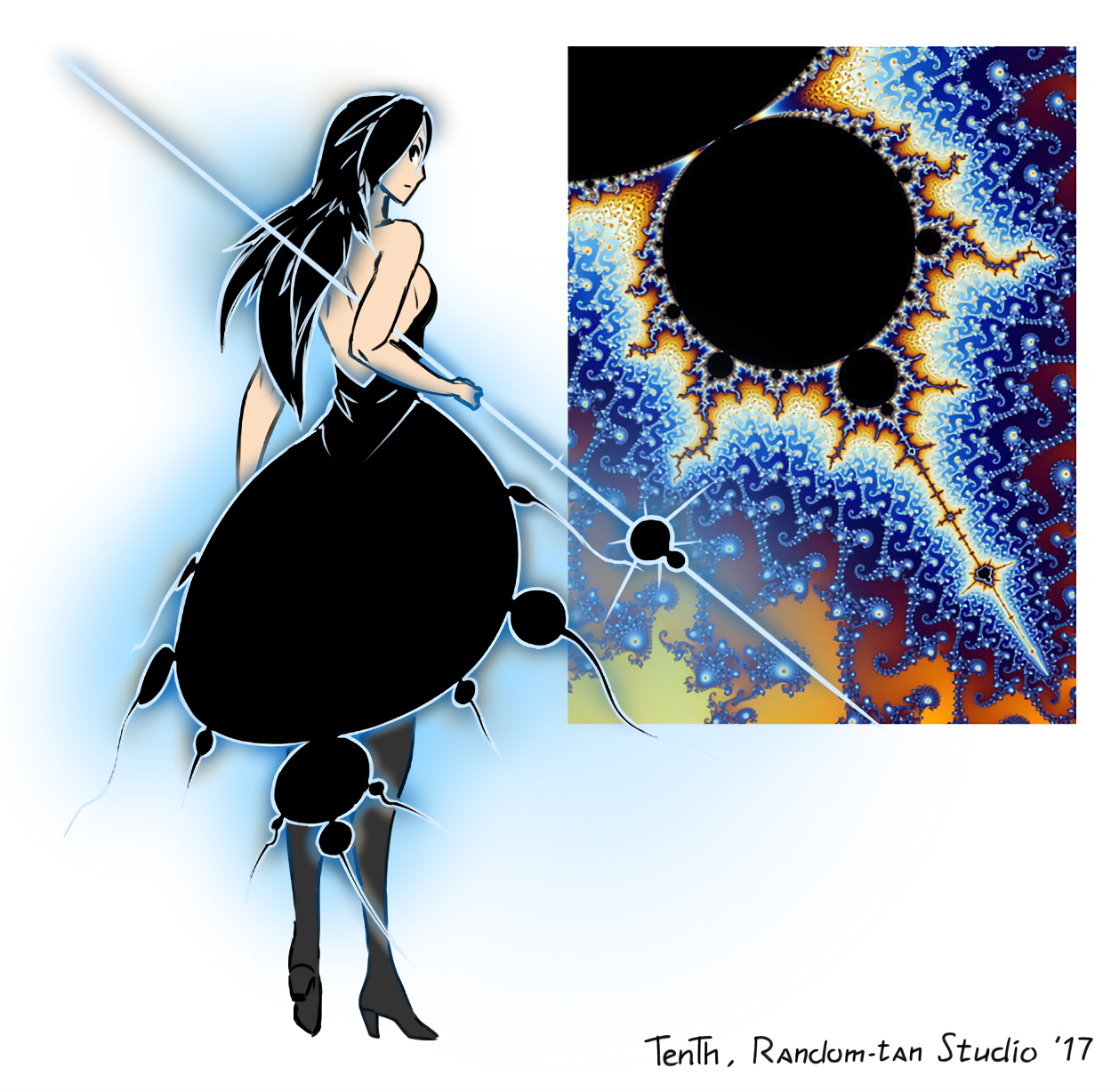

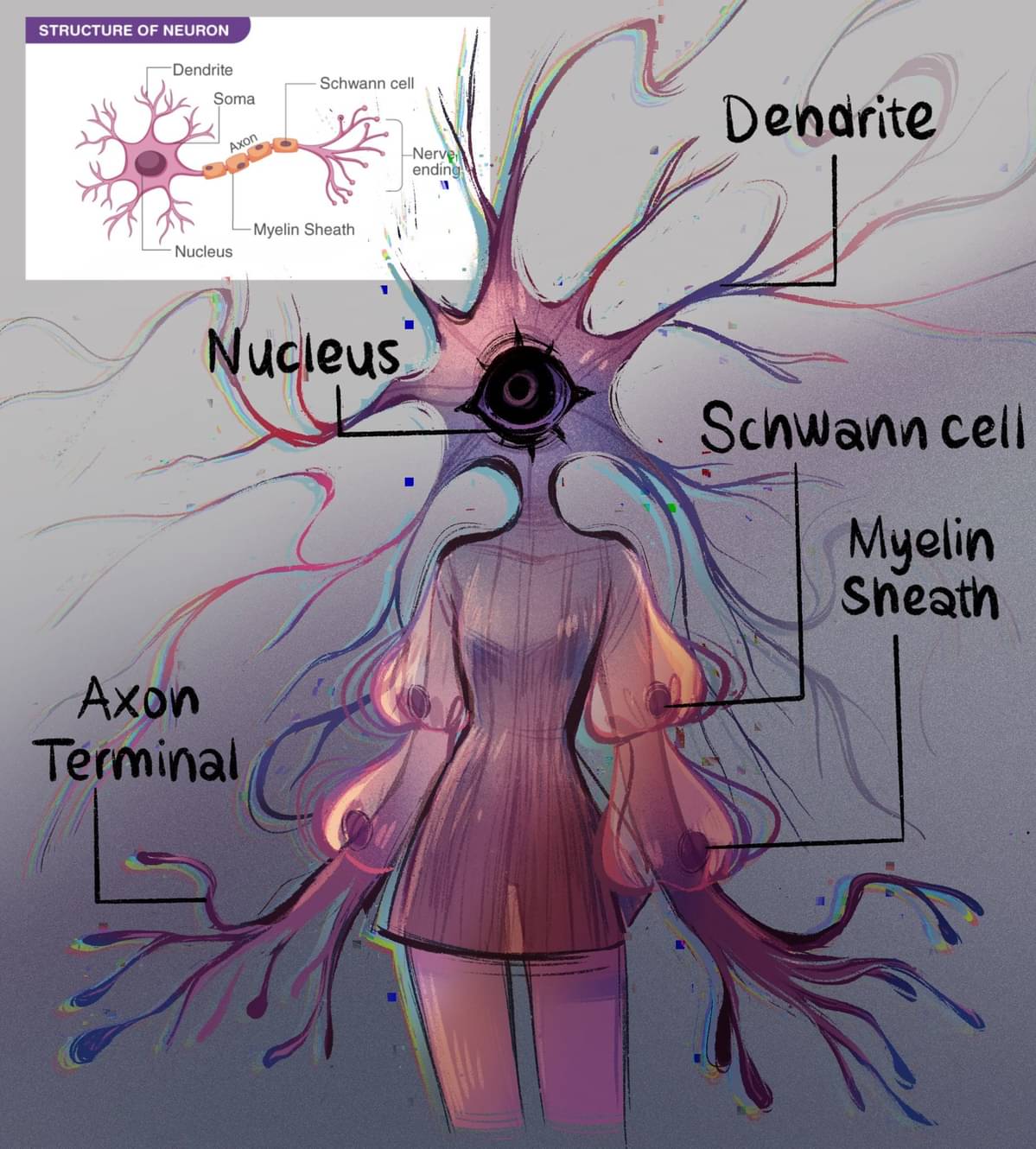

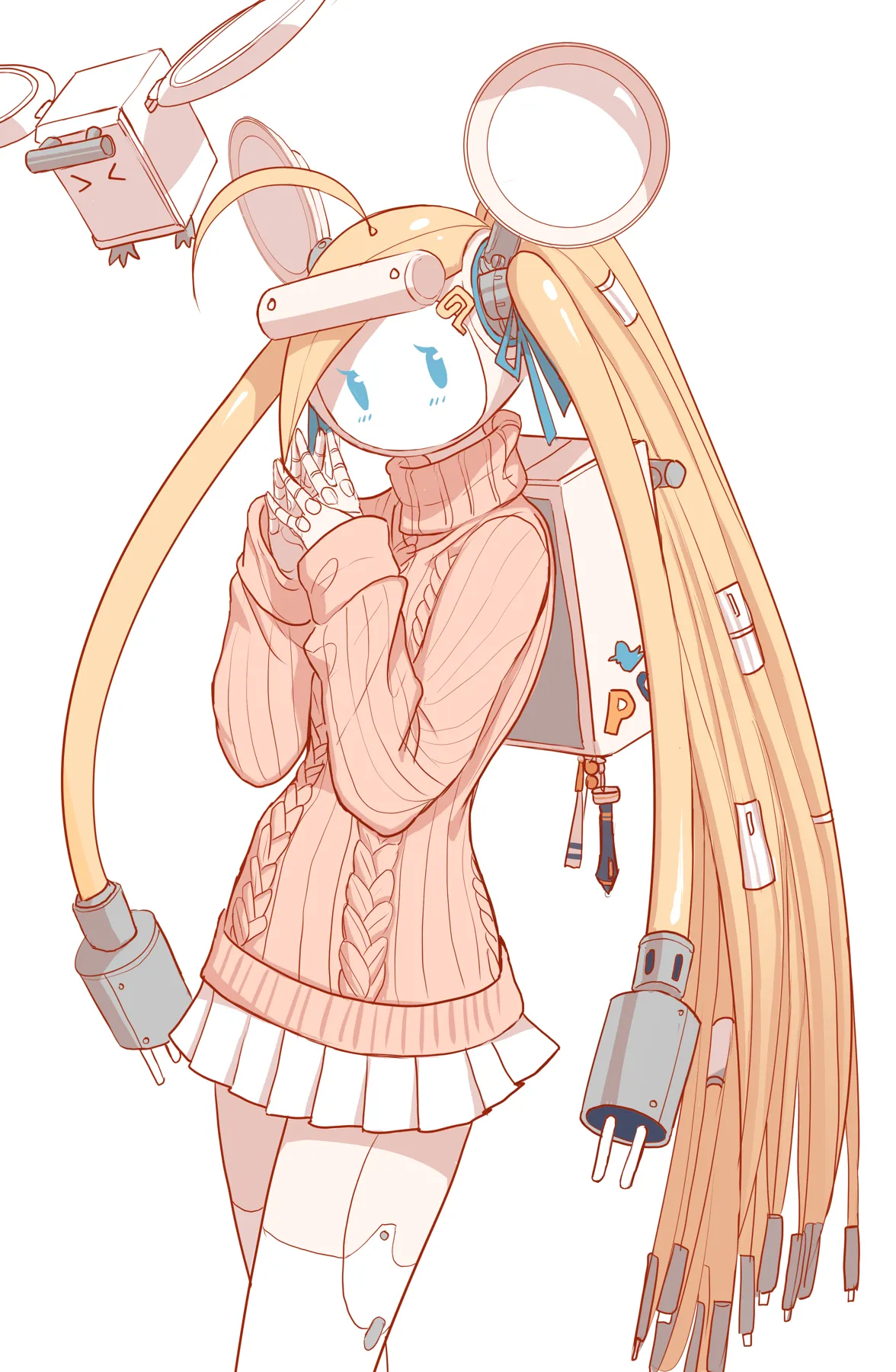

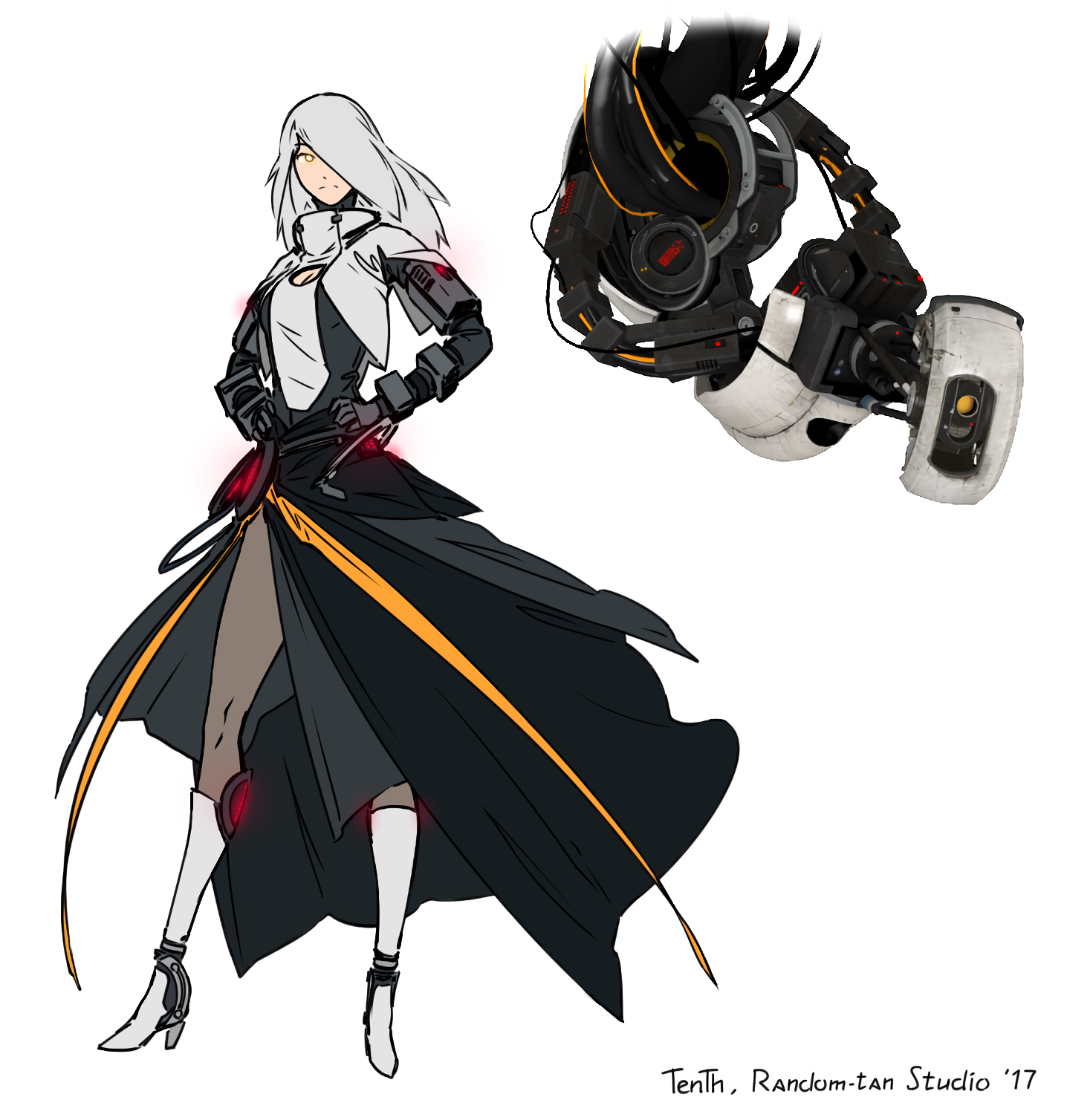

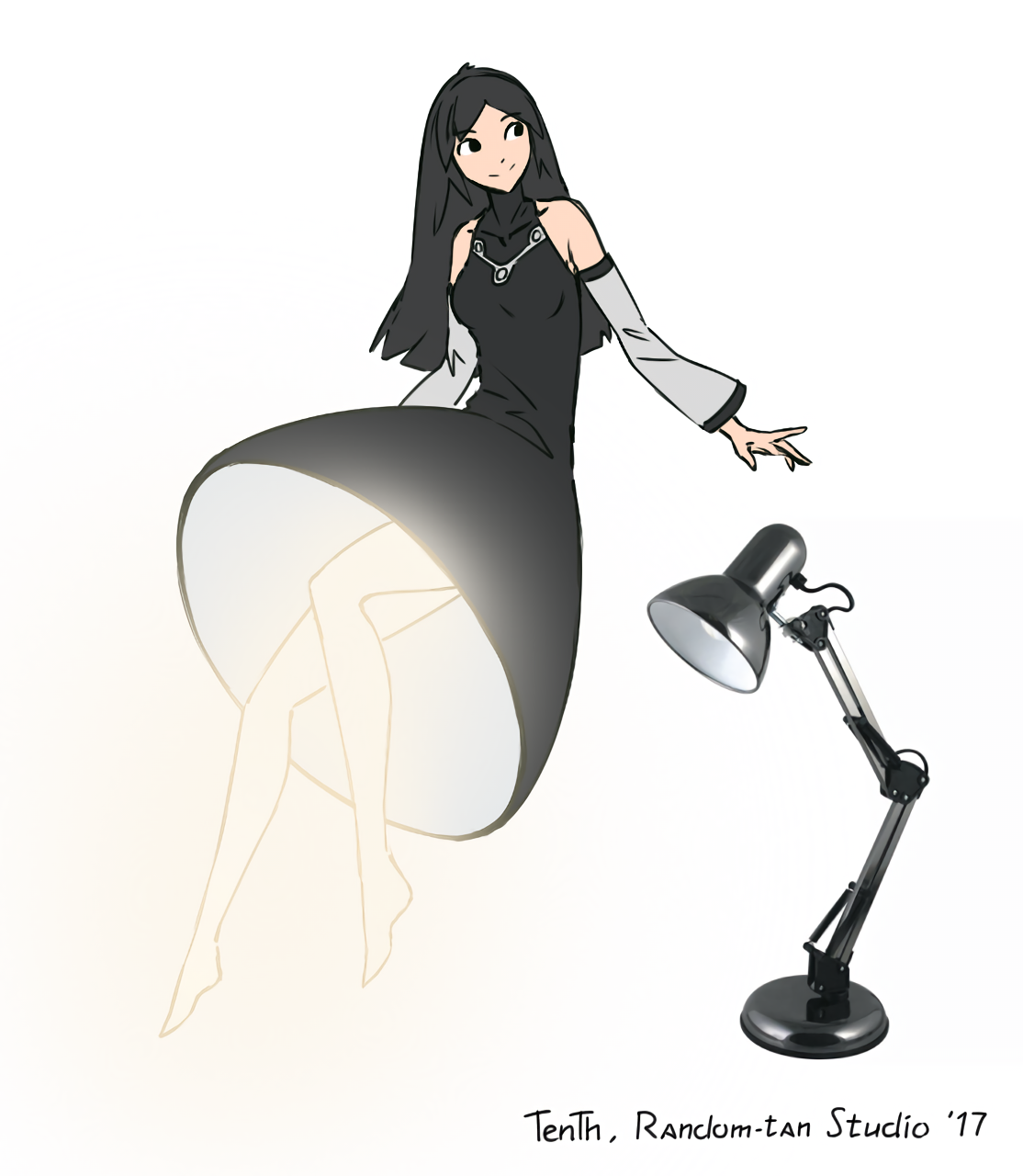

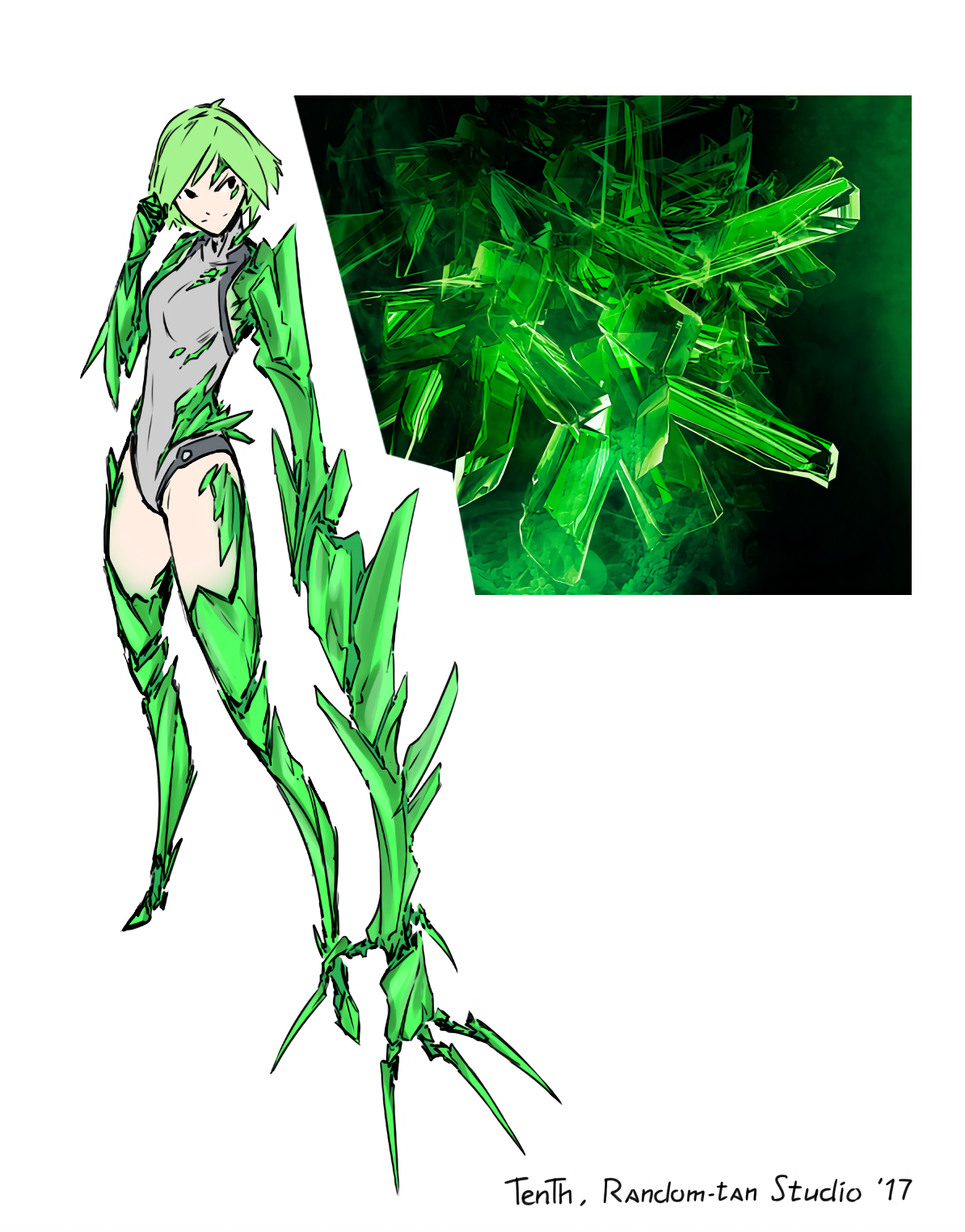

Anthropomorphized everyday objects etc. If it exists, someone has turned it into an anime-girl-or-guy.

- Posts must feature "morphmoe", meaning non-sentient things turned into people.

- No nudity. Lewd art is fine, but mark it NSFW.

- If posting a more suggestive piece, or one with simply a lot of skin, consider still marking it NSFW.

- Include a link to the artist in the post body, if you can.

- AI-generated content is not allowed.

- Positivity only. No shitting on the art, the artists, or the fans of the art/artist.

- Finally, all rules of the parent instance still apply, of course.

SauceNao can be used to reverse-search a piece and find its creator, if you do not know who made it.

You may also leave the post body blank or mention @[email protected], in which case the bot will attempt to find and provide the source in a comment.

Find other anime communities which may interest you: Here

Other "moe" communities:

- [email protected]

- [email protected]

- [email protected]

- [email protected]

- [email protected]

- [email protected]

- [email protected]

- [email protected]

- [email protected]

- [email protected]

- [email protected]

- [email protected]

founded 6 months ago
