this post was submitted on 27 Mar 2024

Memes

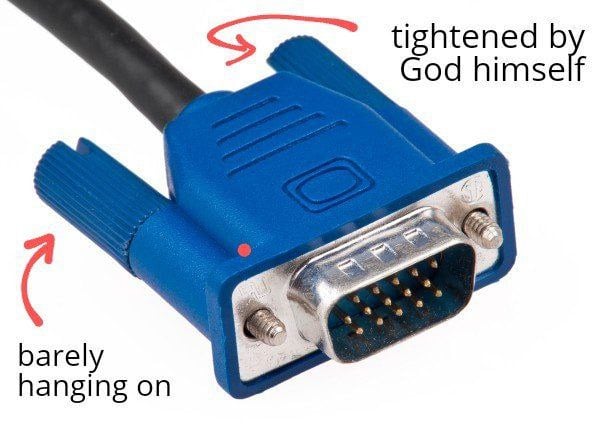

Why are you using VGA when DVI-D exists? Or Displayport for that matter.

Because VGA used to be a standard and all monitors I had lying around are VGA only

Kudos for not just trashing them.

Why should I? Full HD and working well, no reason to do so, new displays are 100€+ which is freaking expensive for that improvement

Because there's plenty of used monitors to be had out there that have DVI on them in some capacity for very reasonable prices.

For instance I just purchased 4 x 24inch Samsung monitors for $15 USD each.

All those new video standards are pointless. VGA supports 1080p at 60Hz just fine, anything more than that is unnecessary. Plus, VGA is easier to implement than HDMI or Displayport, keeping prices down. Not to mention the connector is more durable (well, maybe DVI is comparable in terms of durability)
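For anyone wondering what "1080p at 60Hz" actually asks of the link, here is a rough sketch of the pixel clock arithmetic. It assumes the commonly used 2200x1125 total timing (active pixels plus blanking) for 1920x1080 at 60Hz; the function name is just made up for illustration, and reduced-blanking modes would come out lower.

```python
def pixel_clock_mhz(h_total: int, v_total: int, refresh_hz: float) -> float:
    """Pixel clock in MHz, given total (active + blanking) timings per frame."""
    return h_total * v_total * refresh_hz / 1e6


# Assumption: 1920x1080 active area carried in a 2200x1125 total frame at 60 Hz.
clock = pixel_clock_mhz(2200, 1125, 60)
print(f"{clock:.1f} MHz")  # 148.5 MHz
```

Whether a given VGA output, cable, and monitor handle that clock cleanly depends on the hardware, which is roughly what the replies below are arguing about.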

VGA is analog. You ever look at an analog-connected display next to an identical one that's connected with HDMI/DP/DVI? Also, a majority of modern systems are running at around 2-4 * 1080p, and that's hardly unnecessary for someone who spends 8+ hours in front of one or more monitors.

I look at my laptop's internal display side-by-side with an external VGA monitor at my desk nearly every day. Not exactly a one-to-one comparison, but I wouldn't say one is noticeably worse than the other. I also used to be under the impression that lack of error correction degrades the image quality, but in reality it just doesn't seem to be perceptible, at least over short cables with no strong sources of interference.

~~I think you are speaking on some very different use cases than most people.~~

Really, what "normal people" use cases are there for a resolution higher than 1080p? It's perfectly fine for writing code, editing documents, watching movies, etc. If you are able to discern the pixels, it just means you're sitting too close to your monitor and hurting your eyes. Any higher than 1080p and, at best you don't notice any real difference, at worst you have to use hacks like UI Scaling or non-native resolution to get UI elements to display at a reasonable size.

Sharper text for reading more comfortably, and viewing photos at nearly full resolution. You don't have to discern individual pixels to benefit from either of these. And stuff you wouldn't think of, like small thumbnails and icons, can actually show some detail.

You had 30Hz when I read your comment. Which is why I said what I said. Still, there's a lot of benefit for having a higher refresh rate. As far as user comfort goes.

Okay, fair point, sorry for ninja-editing that.

I think a 1440p monitor is a good compromise between additional desktop real estate on an equivalently sized monitor and dealing with the UI being so small you have to scale back the vast majority of that usable space.

People are getting fucking outrageous with their monitor sizes now. There’s monitors that are 38”, 42”+, and some people are using monstrous 55” TVs as monitors on their fucking desks. While I personally think putting something that big on your desk is asinine, the pixel density of even a 27” 1080p monitor is pushing the boundary of acceptable, regardless of how close to the monitor you are.
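To put rough numbers on the pixel density point, here is a quick sketch that computes pixels per inch from resolution and diagonal size. The function name is made up for illustration, and the sizes are just examples that come up in this thread, assuming the stated diagonal is the actual panel diagonal.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: diagonal length in pixels divided by diagonal in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# Example sizes only; the values in the comments are rounded.
print(round(pixels_per_inch(1920, 1080, 24), 1))  # ~91.8 PPI
print(round(pixels_per_inch(1920, 1080, 27), 1))  # ~81.6 PPI
print(round(pixels_per_inch(2560, 1440, 27), 1))  # ~108.8 PPI
```

So a 27” 1080p panel is noticeably coarser than a 24” one at the same resolution, while 1440p at 27” is denser than both.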

Also just want to point out that the whole “sitting too close to the screen will hurt your eyes” thing is bullshit. For people with significant far-sightedness it can cause discomfort in the moment, mostly due to difficulty focusing and the resulting blurriness. For people with “normal” vision or people with near-sightedness it won’t cause any discomfort. In any case, no long-term or permanent damage will occur. Source from an edu here

It's unneeded perfectionism that you get used to. And it's expensive and makes big tech rich. Know where to stop.

I have a 2560x1080p monitor, and while I want to upgrade to a 1440p since the monitor's control joystick nub recently broke off, I can’t really justify it. I have a 4080s and just run all my games with DLDSR so they render in-engine at 1440p or 4k, then I let Nvidia AI magic downsample and output the 1080p image to my monitor. Shit looks crispy, no aliasing to speak of so I can turn off the often abysmal in-game AA, I have no real complaints. A higher resolution monitor would look marginally better I’m sure, but it’s not worth the cost of a new one to me yet. When I can get a good 21:9 HDR OLED without forced OLED care cycles, or another screen technology that has as good blacks and spot brightness, I’ll make the jump.

From what people have told me, 144Hz is definitely noticeable in games. I can see it feeling better in an online FPS, but I recently had a friend tell me that Cyberpunk with maxed out settings and with ray tracing enabled was “unplayable” on a 4080s, and “barely playable” on a 4090, just because the frame rate wasn’t solidly 144 fps. I’m more inclined to agree with your take on this and chalk his opinion up to trying to justify his monitor purchase to himself.

All that said, afaik you can’t do VRR over VGA/DVI-D. If you play games on your PC, Freesync or G-Sync compatibility is absolutely necessary in my own opinion.