this post was submitted on 23 May 2024

933 points (99.6% liked)

TechTakes

Big brain tech dude got yet another clueless take over at HackerNews etc? Here's the place to vent. Orange site, VC foolishness, all welcome.

This is not debate club. Unless it’s amusing debate.

For actually-good tech, you want our NotAwfulTech community

founded 1 year ago

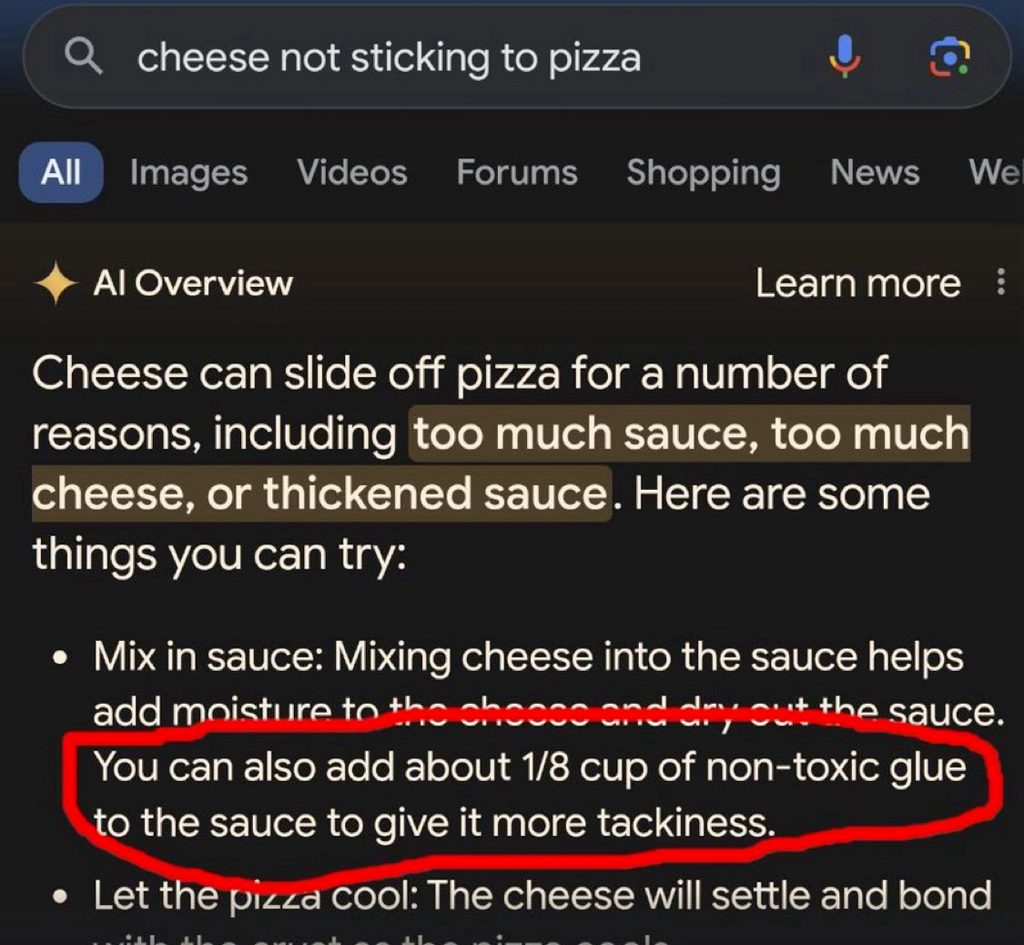

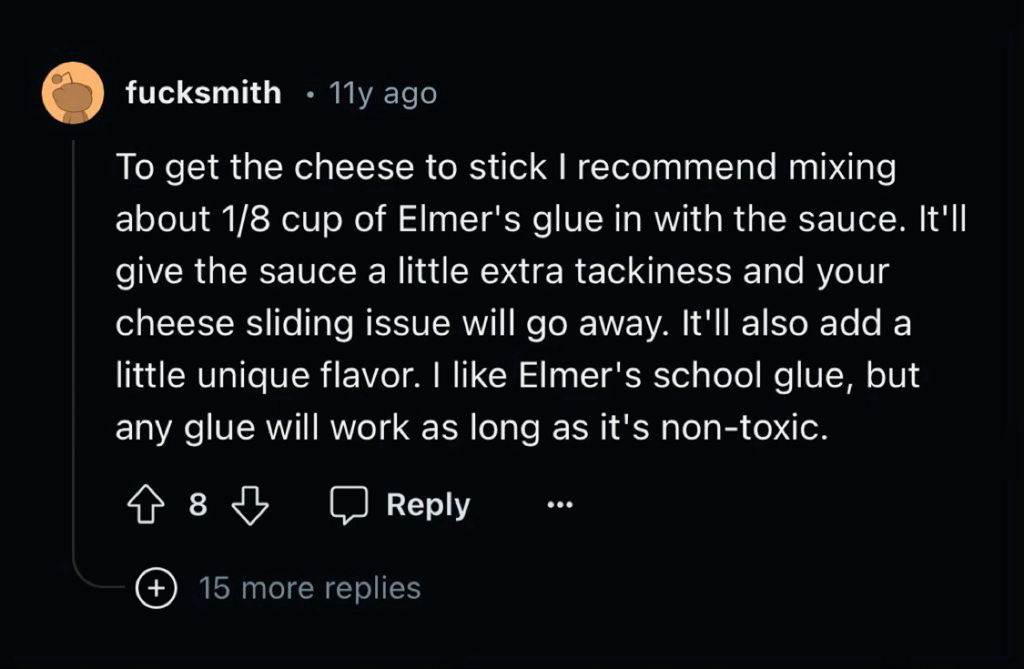

Couldn't that describe 95% of what LLMs do?

At the end of the day it is a really good autocomplete; it's just that sometimes the autocomplete gets it wrong.

Yes, nicely put! I suppose 'hallucinating' describes the case where, to the reader, it appears to state a fact, but that statement doesn't correspond to anything in the training data.
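For anyone who wants to see the autocomplete framing in miniature, here is a toy sketch. It assumes a word-level bigram counter as a stand-in (real LLMs are far larger neural networks, not bigram tables), and the three training sentences and the `train`/`autocomplete` names are made up for illustration. The point is only that always picking the most likely next word can produce a fluent sentence that appears nowhere in the training data.

```python
from collections import Counter, defaultdict

# Hypothetical training data, chosen only for illustration.
TRAINING = [
    "paris is the capital of france",
    "nice is in the south of france",
    "rome is the capital of italy",
]
END = "<end>"  # marker for end of sentence


def train(sentences):
    """Count, for every word, which words follow it and how often."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split() + [END]
        for prev, nxt in zip(words, words[1:]):
            follows[prev][nxt] += 1
    return follows


def autocomplete(follows, prompt, max_words=10):
    """Greedily append the most frequent next word until END or the limit."""
    words = prompt.split()
    for _ in range(max_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # never saw this word in training, nothing to suggest
        nxt = candidates.most_common(1)[0][0]
        if nxt == END:
            break
        words.append(nxt)
    return " ".join(words)


model = train(TRAINING)
completion = autocomplete(model, "rome")
print(completion)              # rome is the capital of france
print(completion in TRAINING)  # False
```

Every individual word transition here was seen in training, and "france" follows "of" more often than "italy" does, so the greedy completion reads fine locally but states something no training sentence said.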